Valentine’s Day Is The Perfect Time To Try These Dating Sims

Dating games are usually simulations, although plenty of games in other genres give you the option to date characters. In those games, romance is usually optional and not required to reach the ending. Dating games, by contrast, stay focused on dating even if you avoid the romantic options, which would defeat the point anyway.

With Valentine’s Day coming up, it’s the perfect time to explore virtual love through dating games. These games range from fun and exciting to funny and, in some cases, scary. There is a dating sim for nearly every type of player.

5

Dream Daddy

Find Love as a Single Father

Dream Daddy is an emotional story. You play a single father whose adopted daughter, Amanda, believes it might be time for you to try to find love again. The opening is bittersweet, as you learn that your former partner, who helped raise Amanda, has passed away.

The game follows the standard dating sim formula. As you go about your daily life, you meet potential love interests. Which love interest you end up with depends on the choices you make, and how interested they are in you depends on the conversation options you pick with them. Each character has a unique personality, so you can pursue whichever one you relate to the most.

4

Hooked on You

Dead by Daylight Reimagined as a Dating Sim

This is a spinoff that shouldn’t work, but somehow it works in the best of ways. Hooked on You is a spinoff of Dead by Daylight, an asymmetrical horror game. For the new game, Psyop took the killers you can choose to play as in the original and made them love interests.

Instead of horror, Hooked on You lets you see the monsters of Dead by Daylight in a new light. Although they may scare you in the original game, they come off as fun and flirty in this dating sim version of their lives. It’s fun, light-hearted, and not at all what you’d expect from a Dead by Daylight game.

3

I Love You, Colonel Sanders

Fried Chicken Can’t Steer You Wrong

Maybe you don’t want to buy a dating sim, but are willing to try one for Valentine’s Day in the spirit of love. If so, I Love You, Colonel Sanders is a good choice, since it is free to play. KFC worked with Psyop to create a dating sim around the face of fried chicken, Colonel Sanders, and the secret recipe that would make him famous.

Maybe it’s the magic of the Psyop development team, but I Love You, Colonel Sanders is incredibly entertaining. The characters are fun, if over the top at times, and your professor is a dog. It’s strange, but because of that, it’s a refreshing take on the dating sim that you wouldn’t expect to see. Between this game and options like Hooked on You, it seems that unexpected premises are what make the best dating sims.

2

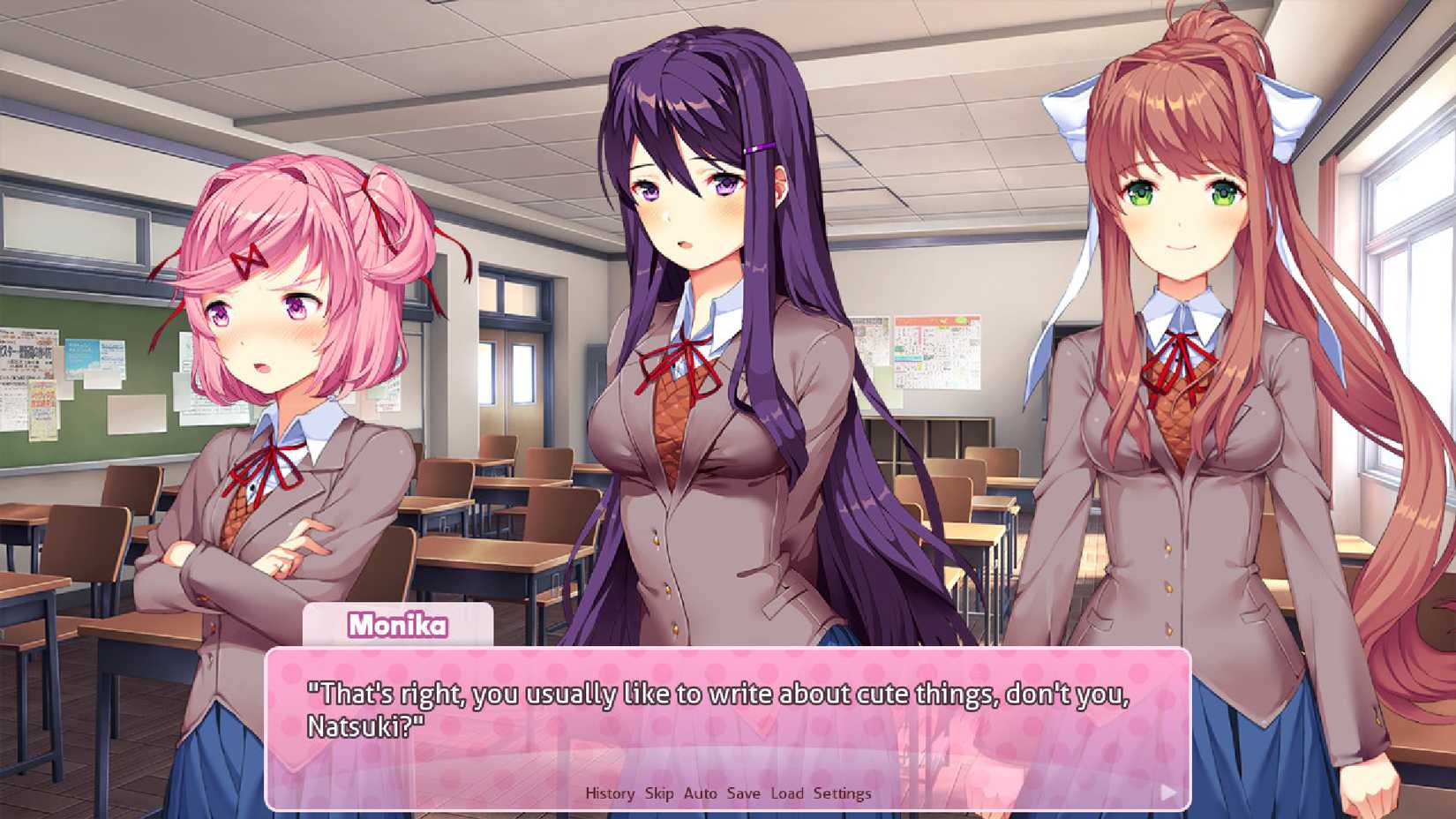

Doki Doki Literature Club

The Hidden Horror Behind Dating

This game is a classic dating sim, but it also comes up whenever people discuss horror games. What seems like an average dating sim turns dark in Doki Doki Literature Club. At the beginning, you feel like you are playing a typical high school romance visual novel, but it gets increasingly uncomfortable as it turns into a psychological horror game.

There are warnings throughout the game that something is wrong, and it goes meta: the characters you talk to aren’t addressing your character, but rather you, the person behind the screen playing the game. It grows more uncomfortable the more times you play it, and it turns out that the only way to win is to delete certain files from the game’s folder on your computer.

1

Monster Prom

A Dating Sim To Play With Friends

Monster Prom is one of the few dating sims that lets you play co-op. You can invite up to three of your friends to join you in this supernatural high school romance. The goal is to get a prom date, but that’s easier said than done, especially when you’re working against a deadline.

Another unique feature of this game is that you have to raise your stats and improve your character to increase your chances of landing a date for the upcoming prom. You don’t usually think of dating games as the kind to play with friends, but that is what makes the Monster Prom experience so much fun. If you’ve played Doki Doki Literature Club, you might need to turn to Monster Prom to lift your spirits.

Dating games can be a fun genre, but it is also one crowded with rushed games and games that are incredibly graphic in their content. That makes it difficult to find well-balanced dating games that value relationship building, with a story and a personality for both your character and the characters you meet along the way. Fortunately, there are still older games, and new releases from time to time, that show that well-crafted dating sims can stay with you long after you finish them.
