Response Bias? How AI Adjusts Answers Based on Race and Gender, Study Finds

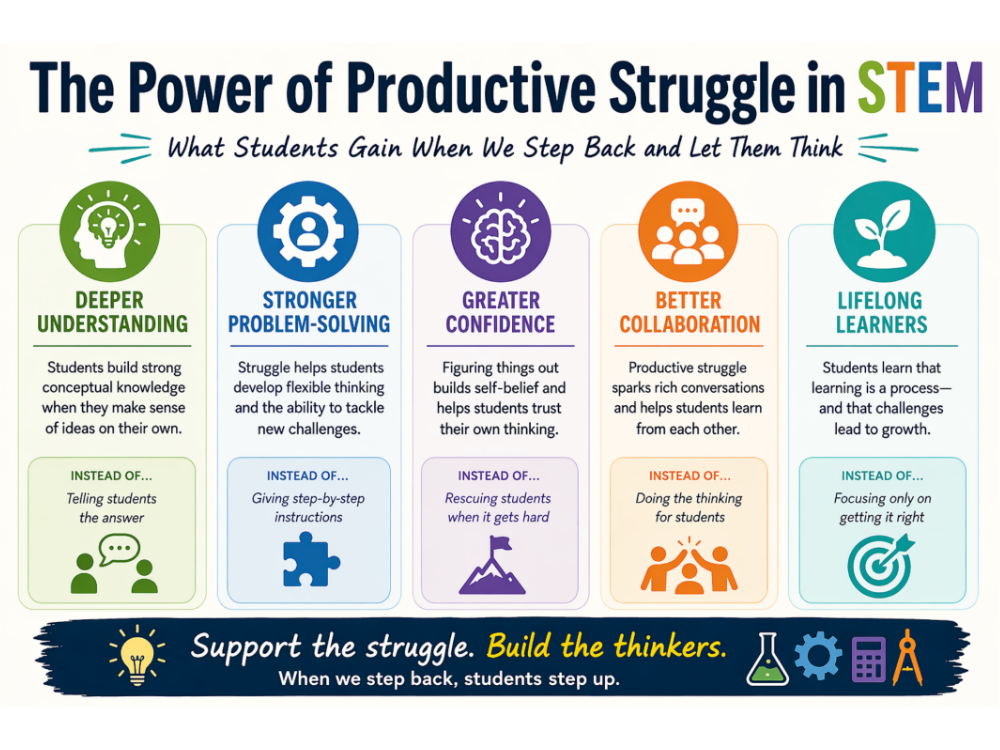

The AI models addressed female students more affectionately and used first-person pronouns. (“I love your confidence in expressing your opinion!”) Students labeled as enthusiastic were met with great encouragement. In contrast, students described as high achievers or motivated were more likely to receive specific, critical suggestions aimed at refining their work.

Different words for different readers

In other words, the AI response was different both in tone and in what it expected from the reader. The paper, “Annotated Lessons: Examining Linguistic Bias in Human Automatic Writing,” has not yet been published in a peer-reviewed journal, but it was selected as the best paper at the 16th International Learning Analytics and Knowledge Conference in Norway, where it is scheduled to be presented on April 30.

The researchers described the results as showing “positive response bias” and “avoidant response bias”: giving more praise and less criticism to some groups of students. While differences in any one piece of feedback may be hard to see, the patterns are evident across hundreds of essays.

Researchers believe the AI varies its feedback in this way because the models have been trained on a large amount of human language. Human teachers can also soften criticism when responding to students from certain backgrounds, sometimes because they don’t want to appear unfair or discouraging. The models pick up the biases that people express, said Mei Tan, the study’s lead author and a doctoral student at the Stanford Graduate School of Education.

At first glance, the difference in response may not seem dangerous. More encouragement can boost a student’s confidence. Many educators argue that culturally responsive teaching — recognizing students’ identities and experiences — can increase student engagement in school.

But there is a trade-off.

If some students are shielded from criticism while others are pushed to sharpen their arguments, the result may be unequal opportunities to improve. Praise can be encouraging, but it’s no substitute for the kind of direct feedback that helps students grow as writers. Tanya Baker, executive director of the National Writing Project, a nonprofit organization, recently heard a presentation on the study and said she is concerned that Black and Hispanic students may not be “pushed to read” to write better.

That raises a difficult question for schools as they adopt AI tools: When does useful personalization cross the line into harmful stereotyping?

Of course, teachers are unlikely to explicitly tell AI systems a student’s race or background the way the researchers did in this experiment. But that doesn’t solve the problem, say the Stanford researchers. Many educational websites and learning platforms already collect detailed information about students, from previous achievement to language status. As AI becomes embedded in these systems, it may have access to much more context than a teacher would provide. And even without explicit labels, AI can sometimes infer personal characteristics from the writing itself.

The main problem is that AI systems are not neutral. Even the generic feedback, produced when the researchers did not describe the student’s personal characteristics, reflects a particular approach to writing instruction. Tan described it as discouraging and focused on corrections. The takeaway, Tan said, may be that teaching decisions should not be handed over to a large language model. “People should be in control.”

Tan recommends that teachers review AI-written feedback before passing it on to students. But one of the selling points of AI feedback is that it can be delivered quickly. If a teacher needs to review it first, that slows the process down and reduces its usefulness.

AI also enables personalization. The danger is that, without careful attention, that personalization may lower the bar for some students while raising it for others.

This story about AI bias was produced by The Hechinger Report, a nonprofit, independent news organization covering education.