Catching Production Bugs Before They Happen

Production bugs are expensive, but the real cost isn’t just fixing them. It’s lost revenue, damaged trust, and engineering time spent fighting fires instead of building. According to IBM’s Cost of a Data Breach Report (2023), problems caught in production can cost up to 15x more than those identified during development.

The uncomfortable truth? Traditional debugging is reactive. You see bugs after they happen. By then, the damage has already been done.

This is where ADLC, the AI-driven software development life cycle, comes in: a complete rethink of debugging. Instead of reacting to failures, the AI-driven software development life cycle predicts, detects, and prevents them before they reach production.

Here’s how AI debugging in ADLC moves teams from reactive problem solving to proactive quality engineering.

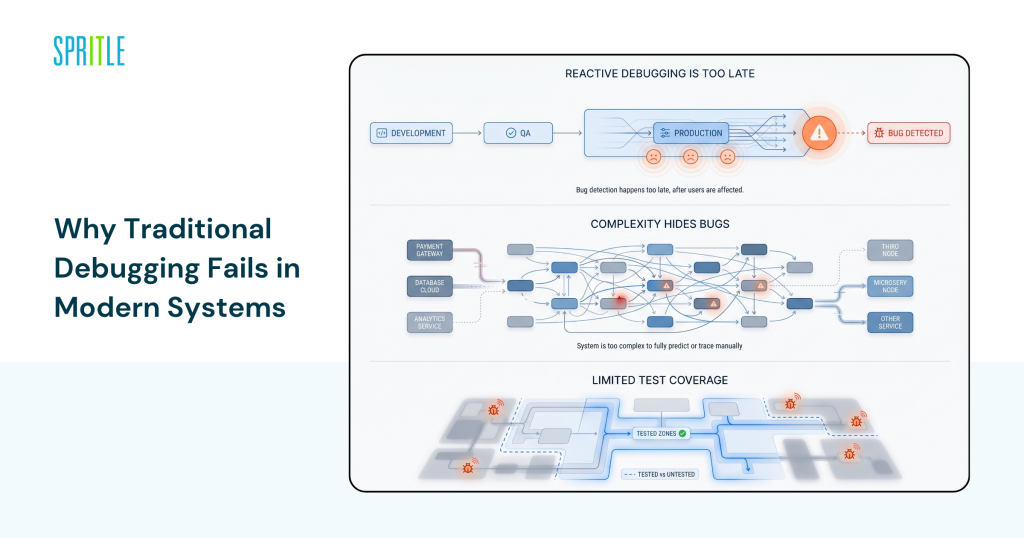

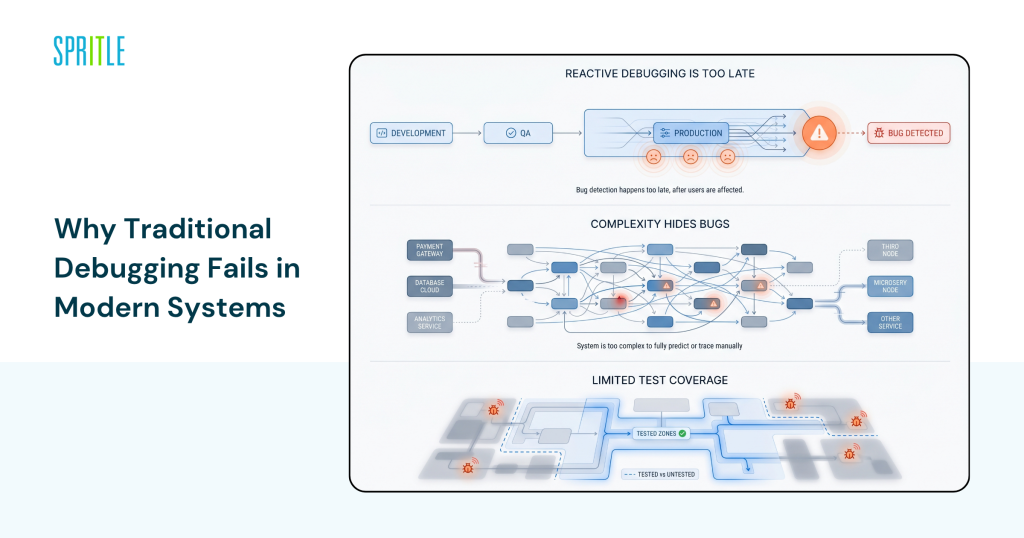

Why Traditional Debugging Fails in Today’s Systems

Modern systems are no longer simple. Microservices, distributed architecture, and continuous deployment have made debugging more difficult.

Reactive Debugging Is Too Late

In a traditional SDLC:

- Bugs are identified during QA or after deployment

- Debugging relies on logs, monitoring, and manual tracing

- Fixes often require hot patches or rollbacks

By the time a bug is discovered, users may already be affected.

Google SRE reports (2022) show that more than 70% of serious incidents arise from rare conditions that were not caught during development.

Complexity Hides Bugs

Today’s applications include:

- Tens (or hundreds) of microservices

- Third party APIs

- Asynchronous workflows

This complexity makes it nearly impossible to predict all failure scenarios manually.

Limited Testing

Even with strict QA:

- Test cases cover expected scenarios

- Edge cases and unusual situations are often missed

Traditional debugging is limited to what you think might go wrong, not what actually does.

What AI Debugging in ADLC Really Means

AI debugging in ADLC goes beyond log analysis and automated testing. It introduces predictive intelligence into the debugging process.

Predictive Error Detection

AI models analyze:

- Code patterns

- Historical bug data

- Runtime behavior

They can flag potential problems before the code is released.

Tools like DeepCode (Snyk Code) and GitHub Advanced Security use machine learning to find vulnerabilities and logic errors during development.
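To make the idea of combining historical bug data with change patterns concrete, here is a minimal sketch of a file-level risk score. It is a hand-written heuristic rather than a trained model, and it does not reflect how any of the tools named above work internally. The `FileStats` fields, the weights, and the threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class FileStats:
    """Hypothetical per-file signals a team might already collect."""
    path: str
    past_bug_fixes: int   # bug-fix commits that touched the file
    recent_changes: int   # commits in the current release window
    lines_changed: int    # lines added or removed in the pending change


def risk_score(stats: FileStats) -> float:
    """Toy heuristic: more past bugs, churn, and larger diffs mean more risk.

    The weights are illustrative and not tuned on real data.
    """
    score = (
        0.5 * stats.past_bug_fixes
        + 0.3 * stats.recent_changes
        + 0.01 * stats.lines_changed
    )
    return round(score, 2)


def flag_risky(files: list[FileStats], threshold: float = 3.0) -> list[str]:
    """Return paths whose score meets the threshold, highest risk first."""
    scored = [(risk_score(f), f.path) for f in files]
    return [path for score, path in sorted(scored, reverse=True) if score >= threshold]


if __name__ == "__main__":
    changes = [
        FileStats("billing/invoice.py", past_bug_fixes=6, recent_changes=4, lines_changed=120),
        FileStats("docs/readme_helper.py", past_bug_fixes=0, recent_changes=1, lines_changed=10),
    ]
    print(flag_risky(changes))
```

A real predictive tool would learn these weights from data, but even a crude score like this can help decide which changes deserve a closer review before release.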

Intelligent Test Generation

Instead of writing test cases by hand, AI:

- Generates dynamic test cases

- Identifies edge cases based on system behavior

- Continuously updates the test suite

This is an important capability in modern AI lifecycle management tools.
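As a rough illustration of generated edge cases, the sketch below uses plain boundary-value generation, a rule-based stand-in for what an AI test generator would learn from system behavior. The function under test, `average_order_value`, and its parameter ranges are hypothetical.

```python
import itertools
from typing import Callable


def boundary_values(low: int, high: int) -> list[int]:
    """Values at and just around the edges of an inclusive integer range."""
    return sorted({low - 1, low, low + 1, (low + high) // 2, high - 1, high, high + 1})


def find_failures(
    func: Callable[..., object],
    param_ranges: list[tuple[int, int]],
) -> list[tuple[tuple[int, ...], str]]:
    """Call func on every combination of boundary values and collect exceptions."""
    failures = []
    candidates = [boundary_values(lo, hi) for lo, hi in param_ranges]
    for args in itertools.product(*candidates):
        try:
            func(*args)
        except Exception as exc:  # broad on purpose: any crash is a finding
            failures.append((args, type(exc).__name__))
    return failures


def average_order_value(total_cents: int, order_count: int) -> float:
    """Hypothetical function under test with an unguarded division."""
    return total_cents / order_count


if __name__ == "__main__":
    for args, error in find_failures(average_order_value, [(0, 10_000), (0, 50)]):
        print(args, error)
```

Every reported failure here has `order_count` equal to zero, the kind of input a hand-written happy-path test tends to skip.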

Anomaly Detection in Real Time

AI monitors:

- Application logs

- Performance metrics

- User behavior

It detects anomalies that indicate potential bugs—even if they aren’t causing a failure yet.

According to Gartner (2024), AI-powered observability tools can reduce incident detection time by up to 60%.
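A simple way to see how anomaly detection on a metric can work is a rolling z-score: compare each new reading with the mean and standard deviation of recent readings. The sketch below is a basic statistical baseline, not the method used by any particular product. The window size and threshold are assumed values that would need tuning.

```python
from collections import deque
from statistics import mean, stdev


def detect_anomalies(values, window=20, z_threshold=3.0):
    """Return (index, value) pairs that sit far from the recent rolling average."""
    recent = deque(maxlen=window)
    anomalies = []
    for i, value in enumerate(values):
        if len(recent) == window:
            mu = mean(recent)
            sigma = stdev(recent)
            if sigma > 0 and abs(value - mu) / sigma > z_threshold:
                anomalies.append((i, value))
                continue  # keep the outlier out of the baseline
        recent.append(value)
    return anomalies


if __name__ == "__main__":
    # Synthetic latency readings in milliseconds with one injected spike.
    latencies = [100 + (i % 5) for i in range(40)]
    latencies[30] = 180
    print(detect_anomalies(latencies))
```

The spike is flagged even though nothing has failed yet, which is the point: a latency drift often shows up before an outage does.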

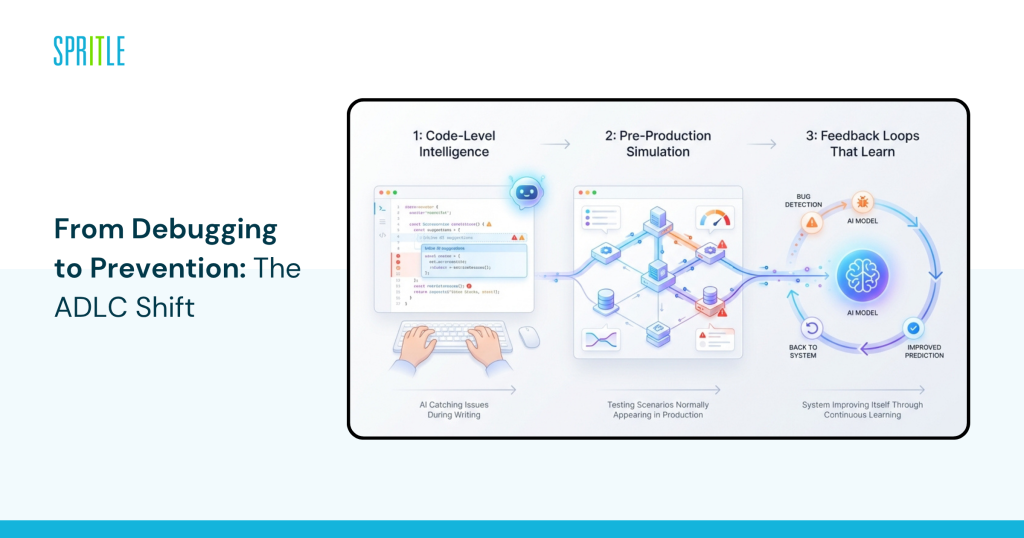

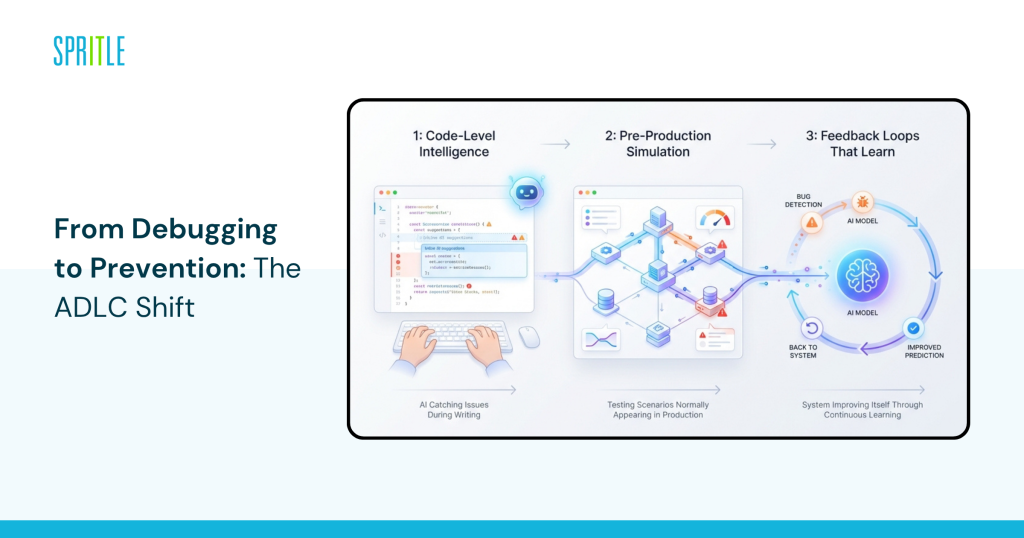

From Error Correction to Prevention: The ADLC Shift

This is where it gets interesting.

In the AI-driven software development life cycle, debugging is no longer a phase; it is an ongoing capability embedded throughout the life cycle.

Code-Level Intelligence

AI tools analyze code as it is written:

- Suggest real-time fixes

- Highlight dangerous patterns

- Prevent bugs from entering the codebase

GitHub Copilot and Amazon CodeWhisperer already enable this at scale.

Pre-Production Simulation

AI can simulate:

- User traffic patterns

- System load conditions

- Failure conditions

This helps teams identify bugs that may only appear in production environments.
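The sketch below shows the simplest form of this idea: simulating a flaky dependency before release to see how a design choice (here, retries) changes the failure rate users would see. The failure rate, retry count, and request volume are made-up parameters, and the model assumes each attempt fails independently, which real systems often violate.

```python
import random


def simulate_requests(n_requests, failure_rate, max_retries, seed=42):
    """Simulate calls to a flaky dependency and return the share that fail.

    Each attempt fails independently with probability failure_rate; a request
    fails only if the first attempt and every retry fail.
    """
    rng = random.Random(seed)  # seeded so runs are repeatable
    failed = 0
    attempts = 1 + max_retries
    for _ in range(n_requests):
        if all(rng.random() < failure_rate for _ in range(attempts)):
            failed += 1
    return failed / n_requests


if __name__ == "__main__":
    for retries in (0, 1, 2):
        rate = simulate_requests(10_000, failure_rate=0.2, max_retries=retries)
        print(f"retries={retries} user-visible failure rate={rate:.4f}")
```

Swapping in measured traffic patterns and failure rates turns the same loop into a cheap way to test assumptions before they are tested in production.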

Learning Feedback Loops

Every bug found:

- Feeds back into the AI models

- Improves future predictions

Over time, the system becomes more accurate in identifying risk areas.

Forrester (2023) notes that organizations using AI debugging report 30–50% fewer production incidents.
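A feedback loop can be sketched without any machine learning at all: record whether each shipped change caused a bug, and let the estimate for that module shift accordingly. The `BugFeedback` class and the module names below are hypothetical, and the add-one smoothing is one reasonable choice among several.

```python
from collections import defaultdict


class BugFeedback:
    """Tracks, per module, how many changes shipped and how many caused bugs."""

    def __init__(self):
        self.changes = defaultdict(int)
        self.bugs = defaultdict(int)

    def record_change(self, module, caused_bug):
        """Feed one outcome back into the running counts."""
        self.changes[module] += 1
        if caused_bug:
            self.bugs[module] += 1

    def estimated_risk(self, module):
        """Smoothed bug rate: (bugs + 1) / (changes + 2).

        Add-one smoothing keeps unseen modules at a neutral 0.5 instead of 0.
        """
        return (self.bugs[module] + 1) / (self.changes[module] + 2)


if __name__ == "__main__":
    feedback = BugFeedback()
    for outcome in (True, False, True):
        feedback.record_change("payments", caused_bug=outcome)
    for _ in range(8):
        feedback.record_change("search", caused_bug=False)
    for module in ("payments", "search", "new_module"):
        print(module, feedback.estimated_risk(module))
```

Each recorded outcome nudges the estimate, so the ranking of risky modules reflects what actually broke rather than what someone guessed would break.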

Real-World Examples of AI Debugging in Action

1. Netflix’s Chaos Engineering + AI Insights

Netflix uses chaos engineering tools like Chaos Monkey combined with advanced analytics.

Result:

- Simulates failures continuously

- Identifies vulnerabilities before users are affected

- Reduces downtime significantly

This closely mirrors ADLC’s predictive debugging approach.

2. Meta’s Static Analysis at Scale

Meta (Facebook) uses AI-driven analysis tools to scan millions of lines of code.

Result:

- Detects bugs before deployment

- Reduces manual code review effort

- Improves overall code quality

Their systems catch thousands of potential problems every day.

3. Amazon’s Automated Testing and Monitoring

Amazon integrates AI into:

- Testing pipelines

- Monitoring systems

Result:

- Faster bug detection

- Automated root cause analysis

- Continuous improvement of debugging models

This is a strong example of the AI-driven software development life cycle in an enterprise setting.

Business Impact: Why CTOs are Prioritizing AI Debugging

This isn’t just a technology upgrade; it’s a business decision.

Reduced Downtime and Revenue Loss

Production bugs can:

- Disrupt services

- Impact customers

- Lead to outages

AI debugging reduces these risks.

Engineering Productivity Gains

Teams spend less time:

- Fixing production problems

- Writing repetitive test cases

And more time building new features.

Improved Product Reliability

Consistent performance builds:

- Customer trust

- Product reputation

This is especially important for SaaS platforms exploring strategies to hire an AI development team.

Challenges You Shouldn’t Ignore

The honest answer: AI debugging presents its own challenges.

False Positives

AI tools may:

- Flag non-critical issues

- Create noise in development workflows

Teams need to fine-tune models and thresholds.
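Tuning a threshold usually means trading precision (how many flagged issues were real) against recall (how many real issues were flagged). The sketch below computes both from past alerts. The alert scores and labels are invented sample data, and treating an empty result as precision 1.0 is a convention assumed here.

```python
def precision_recall(alerts, threshold):
    """Compute (precision, recall) for alerts scored at or above threshold.

    alerts is a list of (score, was_real_bug) pairs from past reviews.
    """
    flagged = [real for score, real in alerts if score >= threshold]
    true_positives = sum(flagged)
    total_real = sum(real for _, real in alerts)
    precision = true_positives / len(flagged) if flagged else 1.0
    recall = true_positives / total_real if total_real else 1.0
    return precision, recall


if __name__ == "__main__":
    # Invented history: model confidence and whether a human confirmed the bug.
    history = [(0.9, True), (0.8, True), (0.7, False), (0.6, True), (0.4, False), (0.2, False)]
    for threshold in (0.5, 0.75):
        precision, recall = precision_recall(history, threshold)
        print(f"threshold={threshold} precision={precision:.2f} recall={recall:.2f}")
```

Raising the threshold on this sample removes the false positive but also misses one real bug, which is the trade-off teams have to decide on deliberately.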

Integration Complexity

Using AI debugging requires:

- Integration with CI/CD pipelines

- Alignment with existing tools

This is where most teams struggle without ADLC consulting services.

Skill Gaps

Developers need to:

- Understand the insights generated by AI

- Interpret predictions effectively

Without proper training, AI tools can be underutilized.

How to Incorporate AI Debugging into Your ADLC

You don’t need a complete overhaul to get started.

Practical Steps to Adoption

- Get started with AI-powered code analysis tools

Integrate tools like Snyk Code or GitHub Advanced Security.

- Improve your testing strategy with AI

Use AI to generate and optimize test cases.

- Adopt AI-driven observability platforms

Tools like Datadog or Dynatrace provide anomaly detection.

- Build feedback loops into your pipeline

Ensure bugs feed back into AI models for continuous improvement.

- Consider professional support when you scale

Partnering with ADLC consulting services can speed up adoption.

What to Look for in an AI Debugging Strategy

What separates teams that prevent bugs from those that chase them is foresight.

A strong AI debugging strategy includes:

- End-to-end visibility throughout the AI-driven software development life cycle

- Integration between development, testing, and monitoring tools

- Continuous learning models that evolve over time

- Alignment with business goals—not just performance metrics

Organizations that get this right don’t just reduce bugs—they redefine quality.

FAQ

Q: How does AI debugging differ from traditional debugging?

A: Traditional debugging is reactive, focusing on fixing problems after they happen. AI debugging in ADLC is proactive, using machine learning to predict, detect, and prevent bugs before they reach production.

Q: Can AI debugging completely eliminate production bugs?

A: No system can guarantee zero bugs. However, AI debugging significantly reduces the likelihood and severity of production problems by identifying risks early.

Q: What tools are commonly used for AI debugging?

A: Tools like Snyk Code, GitHub Advanced Security, Datadog, Dynatrace, and Amazon CodeWhisperer are widely used for AI-powered debugging and observability.

Q: Is AI debugging good for small development teams?

A: Yes. Many AI debugging tools are scalable, making them accessible to even small teams.

Conclusion

Catching bugs in production is no longer acceptable when the technology exists to prevent most of them. ADLC changes debugging from a reactive process to a predictive capability embedded throughout the lifecycle.

The AI-driven software development life cycle doesn’t just improve debugging; it changes the way quality is defined. Instead of testing for failures, you design systems that anticipate and avoid them.

If your team still relies on traditional debugging, the gap between you and competitors using the AI-driven software development life cycle will only widen. Teams that invest in AI debugging now are the ones that will ship faster, break less, and build more trust.