How AI Test Automation Fits into ADLC and Why It Replaces Manual QA

Introduction

Manual QA slows down your releases more than your code. Engineering teams across the US are reaching a ceiling where testing cycles can’t keep up with deployment speeds. According to the Global Quality Report 2025, nearly 40% of software delivery delays are directly tied to testing inefficiencies.

Here is the problem. You can’t scale testing the same way you scale development.

This is where AI test automation within ADLC changes the equation. By embedding testing in the AI-driven software development life cycle, teams reduce QA bottlenecks, improve defect detection, and accelerate releases without increasing the cost of QA. The change is not incremental. It is structural, and it starts with understanding how testing works within the ADLC.

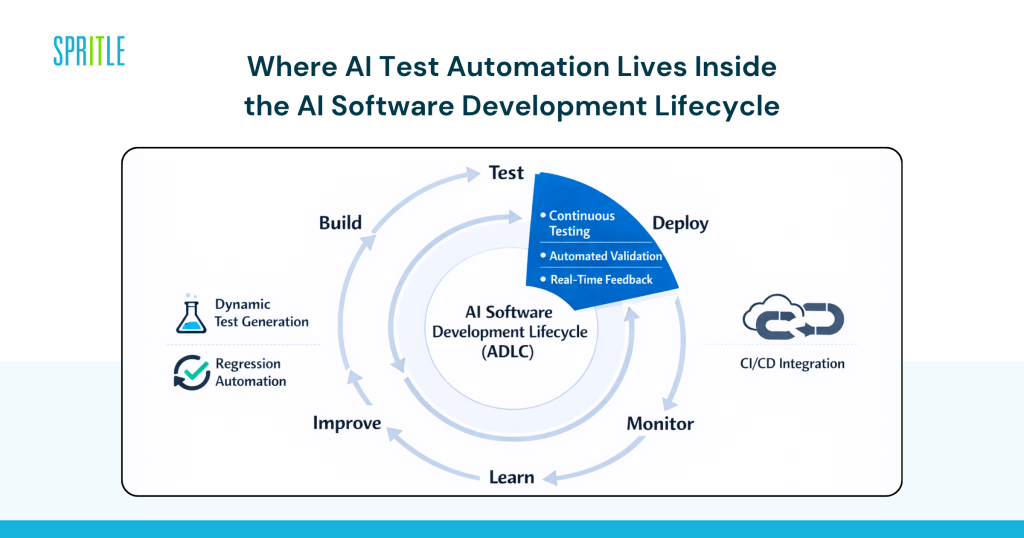

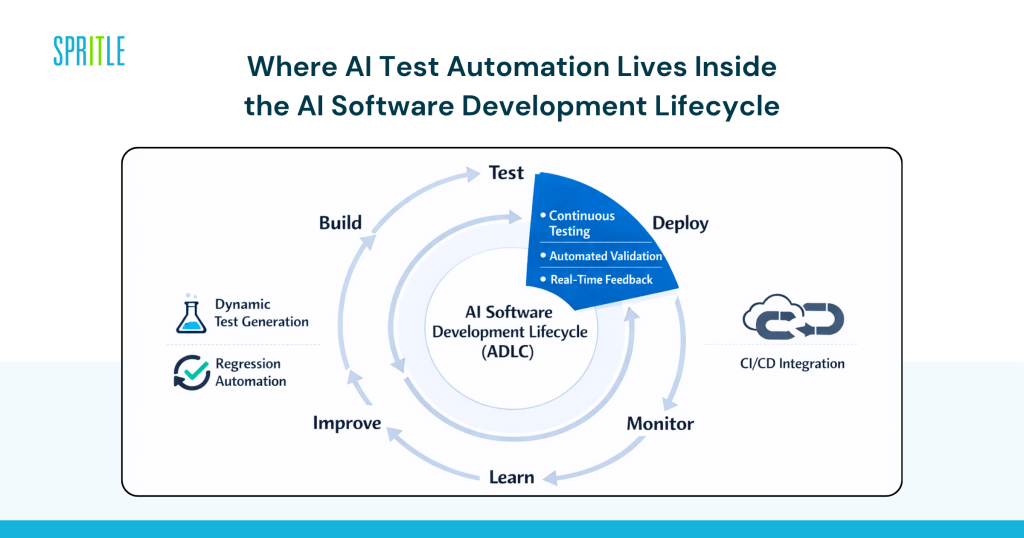

Where AI Test Automation Sits Within the AI Software Development Lifecycle

AI test automation is not a layer added after development. It is an essential part of the AI software development life cycle itself.

AI test automation in ADLC refers to the use of machine learning models and intelligent systems to automatically generate, execute, maintain, and optimize test cases throughout the development lifecycle.

This includes:

- Dynamic test generation based on user behavior

- Automated regression testing

- Real-time validation in CI/CD pipelines

Unlike traditional QA, testing is no longer a phase. It becomes a continuous process embedded in the AI-driven software development life cycle.

Why This Changes Everything

Manual QA relies on scripts, human effort, and static scenarios.

AI-driven testing adapts.

It learns from:

- production data

- user flows

- historical defects

That change is what turns testing from a bottleneck into an accelerator.
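To make the idea concrete, here is a minimal sketch of how usage data and defect history could feed test prioritization. It is a hand-written heuristic standing in for a learned model, not how any particular tool works. The `FlowStats` fields, the equal weighting, and the sample numbers are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class FlowStats:
    name: str          # user flow covered by a test suite, e.g. "checkout"
    sessions: int      # production sessions that exercised this flow (assumed metric)
    past_defects: int  # defects historically traced to this flow (assumed metric)


def rank_flows(flows: list[FlowStats]) -> list[str]:
    """Return flow names ordered from highest to lowest risk score."""
    # Guard against division by zero when no data has been collected yet.
    total_sessions = sum(f.sessions for f in flows) or 1
    total_defects = sum(f.past_defects for f in flows) or 1

    def risk(f: FlowStats) -> float:
        usage_share = f.sessions / total_sessions
        defect_share = f.past_defects / total_defects
        # Equal weights are an arbitrary starting point, not a recommendation.
        return 0.5 * usage_share + 0.5 * defect_share

    return [f.name for f in sorted(flows, key=risk, reverse=True)]


flows = [
    FlowStats("login", sessions=9000, past_defects=2),
    FlowStats("checkout", sessions=3000, past_defects=12),
    FlowStats("profile_edit", sessions=500, past_defects=1),
]
print(rank_flows(flows))
```

In this sample, `checkout` ranks first even though `login` sees more traffic, because its defect history outweighs the usage gap. A real system would replace the fixed weights with a model trained on the team’s own data.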

Why QA Bottlenecks Are Forcing Teams to Rethink Testing

QA costs are increasing, but the biggest problem is speed.

According to Capgemini’s 2025 report, businesses using AI-driven testing can reduce testing effort by up to 30% while improving release frequency.

What most teams miss is this. Manual QA was designed for predictable systems. Modern applications are unpredictable.

Pressure Points You Already Feel

- Regression cycles take days instead of hours

- QA headcount that has to grow with every additional release

- High cost of hiring skilled QA engineers in the US

- Increasing complexity in microservices and APIs

At the same time, AI-native competitors are shipping updates every day. That gap is widening fast.

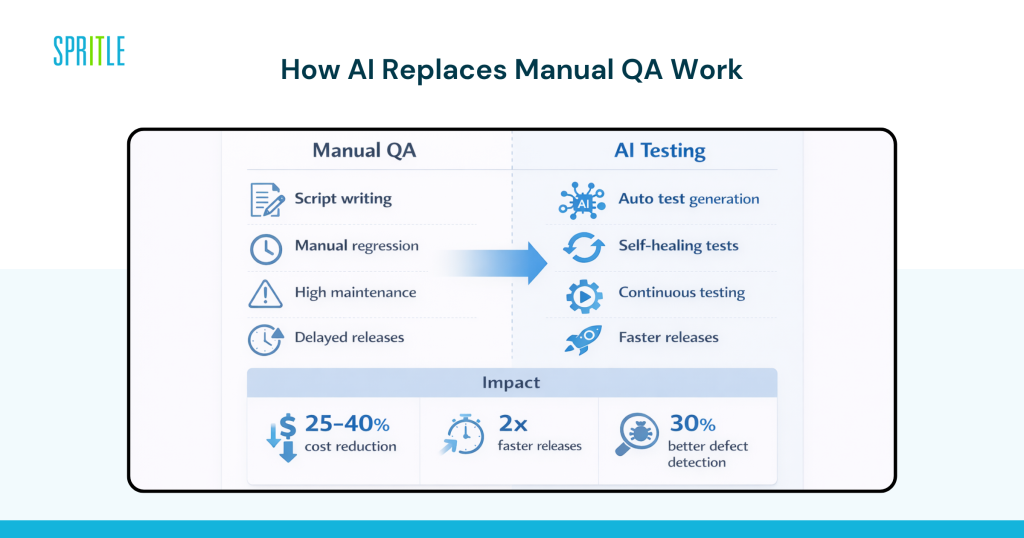

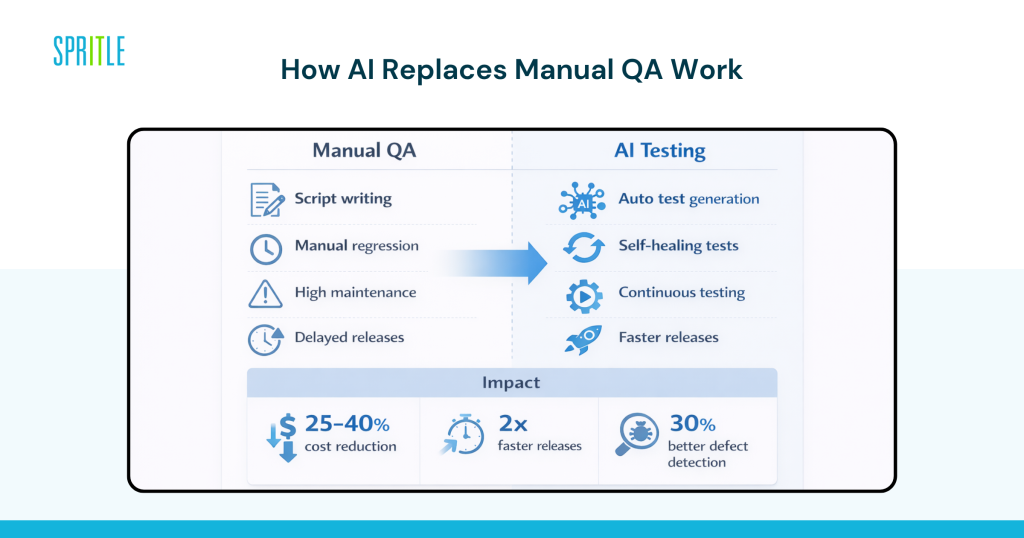

How AI Test Automation Is Replacing Manual QA Work

This is where it gets practical. AI is not completely replacing QA teams. It replaces the types of work that do not scale.

Intelligent Test Generation Instead of Scripting

Tools like Mabl, Testim, and Functionize generate test cases automatically by analyzing application behavior.

Instead of writing scripts, your team defines the intent.

AI manages:

- UI test execution

- API validation scenarios

- edge case detection

Gartner estimates that AI-driven test generation can reduce manual scripting effort by up to 50%.

Self-Healing Tests That Lower Maintenance Overhead

One of the biggest hidden costs in QA is test maintenance.

AI tools solve this with self-healing capabilities:

- UI changes are detected automatically

- test scripts are updated without manual intervention

- false positives are minimized

This alone can reduce maintenance time by 30 to 40 percent.
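The self-healing idea can be illustrated with a small sketch. It assumes a page is represented as a list of attribute dictionaries and that each stored locator keeps a hypothetical `fingerprint` of secondary attributes. Commercial tools use richer signals and their own APIs; this shows only the fallback-and-update pattern.

```python
from typing import Optional

Element = dict[str, str]


def find_with_healing(page: list[Element], locator: dict) -> Optional[Element]:
    """Find an element by id; if the id changed, fall back to its fingerprint."""
    # 1. Normal path: the stored id still exists on the page.
    for el in page:
        if el.get("id") == locator["id"]:
            return el

    # 2. Healing path: pick the element matching the most fingerprint attributes.
    best, best_score = None, 0
    for el in page:
        score = sum(1 for k, v in locator["fingerprint"].items() if el.get(k) == v)
        if score > best_score:
            best, best_score = el, score

    # Accept the candidate only if enough attributes agree (threshold is an assumption).
    if best is not None and best_score >= locator.get("min_matches", 2):
        locator["id"] = best.get("id", locator["id"])  # update the stored locator
        return best
    return None


# The button id was renamed in a UI refactor; role and text stayed the same.
page = [
    {"id": "nav-home", "role": "link", "text": "Home"},
    {"id": "btn-primary-submit", "role": "button", "text": "Place order"},
]
locator = {
    "id": "submit-btn",
    "fingerprint": {"role": "button", "text": "Place order"},
    "min_matches": 2,
}
print(find_with_healing(page, locator), locator["id"])
```

The `min_matches` threshold is the design choice that matters here: set it too low and the test silently attaches to the wrong element, which is one way self-healing can hide real regressions.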

Continuous Testing Within AI-Assisted CI/CD

Testing no longer waits for the release cycle.

Within an AI-assisted CI/CD pipeline, testing runs continuously:

- every commit triggers validation

- errors are caught earlier

- feedback loops improve future test coverage

This creates an intelligent testing and QA system that evolves with your application.
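A simplified version of commit-triggered validation is change-based test selection: run only the tests mapped to the files a commit touched, and fall back to the full suite when the change is in unmapped territory. The sketch below assumes a hand-maintained `COVERAGE_MAP` with made-up paths. An AI-assisted pipeline would typically learn or refine this mapping from past results rather than hard-code it.

```python
# Hypothetical mapping from source directories to the tests that cover them.
COVERAGE_MAP = {
    "payments/": ["tests/test_checkout.py", "tests/test_refunds.py"],
    "auth/": ["tests/test_login.py"],
}

# Every known test, used as the safe fallback.
FULL_SUITE = sorted({t for tests in COVERAGE_MAP.values() for t in tests})


def select_tests(changed_files: list[str]) -> list[str]:
    """Return the tests to run for a commit that touched `changed_files`."""
    selected: set[str] = set()
    for path in changed_files:
        matched = [
            tests for prefix, tests in COVERAGE_MAP.items() if path.startswith(prefix)
        ]
        if not matched:
            # Unknown area of the codebase: be conservative and run everything.
            return FULL_SUITE
        for tests in matched:
            selected.update(tests)
    return sorted(selected)


print(select_tests(["auth/session.py"]))
print(select_tests(["auth/session.py", "README.md"]))
```

Falling back to the full suite on unmapped files trades speed for safety, which is usually the right default until the mapping has earned trust.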

What This Means for Cost, Speed, and Engineering Efficiency

This is the part that most CTOs care about. Does this really move the needle?

The short answer is yes, but only when it is implemented within ADLC.

Measurable Business Impact

Organizations that adopt AI test automation report:

- 25 to 40% reduction in QA costs

- 2x faster release cycles

- up to 30% improvement in defect detection rates

Source: World Quality Report 2025

Why ROI Is Real

You are not only saving time.

You are:

- reducing manual effort

- reducing production defects

- speeding up feedback loops

This is how teams start to reduce the cost of software development with AI without compromising quality.

Real-World Examples of AI Testing in Action

Netflix and Continuous Experimentation at Scale

Netflix uses automated and intelligent testing systems as part of its delivery pipeline.

Impact:

- thousands of tests run on each deployment

- minimal manual QA intervention

- high reliability of the system at scale

Their approach is closely aligned with ADLC principles.

US Fintech Company Modernizing QA

A New York fintech company adopted AI testing tools integrated with CI/CD.

Results:

- regression testing decreased from 48 hours to less than 8 hours

- defect leakage reduced by 20%

- faster compliance validation

SaaS Platform in Chicago Scaling Without Hiring QA

The SaaS company replaced 60% of its manual QA workflows with AI-based testing.

Results:

- no additional QA hires despite product growth

- faster sprint cycles

- improved release confidence

Risks You Need to Plan for Ahead of Time

AI test automation is not plug-and-play. There are real risks if you approach it the wrong way.

Blind Trust in AI Results

Tests generated by AI still need to be validated. Without human oversight, false positives or missed scenarios can occur.
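One way to avoid blind trust is a review gate that routes questionable generated tests to a person before they enter the suite. The sketch below is illustrative only: the `confidence` score and `has_assertions` flag are hypothetical fields, and whether a given tool exposes anything similar is an assumption to verify.

```python
from dataclasses import dataclass


@dataclass
class GeneratedTest:
    name: str
    confidence: float     # hypothetical generator-reported score between 0 and 1
    has_assertions: bool  # a test that asserts nothing cannot fail meaningfully


def triage(
    tests: list[GeneratedTest], min_confidence: float = 0.8
) -> tuple[list[str], list[str]]:
    """Split generated tests into auto-accepted and needs-human-review lists."""
    accepted: list[str] = []
    needs_review: list[str] = []
    for t in tests:
        if t.has_assertions and t.confidence >= min_confidence:
            accepted.append(t.name)
        else:
            needs_review.append(t.name)
    return accepted, needs_review


candidates = [
    GeneratedTest("test_checkout_happy_path", 0.93, True),
    GeneratedTest("test_refund_partial", 0.61, True),
    GeneratedTest("test_profile_page_loads", 0.95, False),
]
print(triage(candidates))
```

The threshold of 0.8 is arbitrary. A team would tune it against how often reviewers actually reject tests at each confidence level.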

Bad Data Leads to Bad Tests

AI systems rely on historical and behavioral data. Weak data sets limit effectiveness.

Tool Fragmentation Across Teams

Using too many disconnected testing tools creates inefficiency instead of solving it.

Compliance and Security

In regulated industries, AI-generated tests must meet strict validation standards.

The honest answer is this. AI testing works best when it’s part of a structured AI-driven software development life cycle, not an independent initiative.

What Top Teams Are Doing Differently With AI Testing

This is where the divide occurs between the teams that experiment with AI and those that scale it.

1. They Embed Testing Early, Not After

Testing is continuous, not a final step.

2. They Set Up Standard Tools and Workflows

Consistency across teams ensures scalability.

3. They Invest in Feedback Loops

All test results feed back into improving future tests.

4. They Combine AI with Human Oversight

AI handles scale. Humans handle judgment.

5. They Partner Strategically When Needed

This is where most teams evaluate ADLC consulting services or work with an AI software development company to speed up adoption.

If you’re trying to build this from scratch, expect a learning curve.

How to Start Using AI Test Automation Without Disrupting Delivery

You don’t need a full transformation on the first day. You need a controlled rollout.

- Identify high-impact QA bottlenecks such as regression testing

- Introduce AI testing tools in a limited-scope environment

- Integrate with existing CI/CD pipelines gradually

- Define validation and security governance

- Expand based on measurable improvements
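The last step, expanding only on measurable improvement, can be written down as an explicit gate. The following is a sketch under stated assumptions: the two metrics, the 25% minimum speedup, and the sample numbers are placeholders a team would replace with its own baseline.

```python
from dataclasses import dataclass


@dataclass
class PilotMetrics:
    regression_hours: float  # wall-clock time of a full regression cycle
    escaped_defects: int     # defects found in production during the period


def should_expand(
    baseline: PilotMetrics, pilot: PilotMetrics, min_speedup: float = 0.25
) -> bool:
    """Expand the rollout only if the pilot is faster and quality did not regress."""
    if baseline.regression_hours <= 0:
        return False  # no meaningful baseline to compare against
    speedup = (
        baseline.regression_hours - pilot.regression_hours
    ) / baseline.regression_hours
    return speedup >= min_speedup and pilot.escaped_defects <= baseline.escaped_defects


baseline = PilotMetrics(regression_hours=48, escaped_defects=10)
pilot = PilotMetrics(regression_hours=8, escaped_defects=8)
print(should_expand(baseline, pilot))
```

Requiring both conditions keeps a faster but leakier pipeline from being counted as a win.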

What separates successful teams is not the tool. It’s how they integrate it into ADLC.

If you want to move fast, working with an experienced AI development life cycle partner or a provider of enterprise AI development solutions can shorten the path significantly.

Frequently Asked Questions

Q: How does AI test automation fit into the AI-driven software development lifecycle?

A: AI test automation is embedded directly into the AI-driven software development lifecycle as a continuous process. It automates test creation, execution, and optimization while integrating with CI/CD pipelines for real-time validation.

Q: Is AI test automation replacing QA engineers completely?

A: No. It replaces repetitive manual tasks like regression testing and script maintenance. QA engineers focus on strategy, edge cases, and validation within the AI software development lifecycle.

Q: What kind of ROI can US companies expect from AI testing?

A: Most organizations see a 25 to 40 percent reduction in QA costs and much faster release cycles. ROI depends on how well AI testing is integrated with ADLC.

Q: Should we build AI testing capabilities in-house or work with partners?

A: If your team lacks knowledge of AI in SDLC, working with an AI software development company or ADLC consulting services provider can accelerate results and reduce implementation risk.

Conclusion

AI test automation doesn’t just improve QA. It redefines how testing works inside the AI-driven software development life cycle. Teams that get it right eliminate bottlenecks, reduce costs, and deliver faster without compromising quality.

The gap between manual QA and AI-driven testing is growing rapidly.

If your team is exploring how to modernize testing, the right ADLC services or AI-driven lifecycle services can help you move from experimentation to real impact.