Open Sourcing Our Real-Time PPE Mobile Application

By Spritle Software Engineering Team

Safety at work is non-negotiable. But manual safety compliance monitoring is slow and inconsistent. We’ve built a real-time Personal Protective Equipment (PPE) detection app that runs entirely on your smartphone: no cloud, no expensive hardware, no network latency.

The Problem We Solve

Every year, thousands of workplace accidents occur because workers are not wearing the proper safety gear. Construction sites, production floors, and industrial facilities require immediate response – not end-of-day inspection reports.

Traditional solutions typically involve:

- Fixed CCTV cameras with expensive cloud processing

- Internet connectivity at remote work sites

- Dedicated hardware or servers

- Minutes of delay before an alert fires

We wanted to prove that sophisticated computer vision can run entirely on an Android phone and give an immediate response when a safety violation occurs.

Why We’re Open Sourcing This

When we started this project, we did what all developers do – we looked for open source implementations that already existed.

We found many PPE projects on GitHub: YOLO models, Python scripts, Roboflow datasets, Flask dashboards. The computer vision side was well covered. But when we looked for a complete, ready-to-run Android app, something you can clone, build, and point at a worker with a phone camera, it just wasn’t there.

Every project we found had the same limitation: it was built for a Raspberry Pi, ran on a laptop with OpenCV, needed a server in the background, or depended on cloud APIs. The demos are great, but none of them work offline on the phone in someone’s pocket.

No one had taken a PPE model all the way to a native Android app with real-time inference on the device. The last mile was always missing.

So we built it ourselves: we trained the model, exported it to TFLite, wired it into a CameraX pipeline, and ran it at up to 30 FPS on a mid-range Android phone with no Internet connection.

And since we couldn’t find it when we needed it, we’re putting it out there so the next team doesn’t have to go through the same search.

What You Get

The app detects 5 key PPE classes in real time:

✅ helmet – the worker is wearing a hard hat

⚠️ no-helmet – the worker is NOT wearing a hard hat ← critical

✅ vest – the worker is wearing a safety vest

⚠️ no-vest – the worker is NOT wearing a safety vest

👤 person – any person in the frame

The no-helmet and no-vest classes are the most important – they trigger a safety alert.
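The alert rule above can be sketched in a few lines of Python. This is only an illustration, not the app's Kotlin code: the `Detection` type, the `violations` helper, and the 0.5 confidence threshold are assumptions made for the example.

```python
from dataclasses import dataclass

# The two classes that should trigger a safety alert.
ALERT_CLASSES = {"no-helmet", "no-vest"}


@dataclass
class Detection:
    """Hypothetical container for one detected object."""
    label: str         # predicted class name
    confidence: float  # score in [0, 1]


def violations(detections, min_confidence=0.5):
    """Return the sorted violation labels seen at or above min_confidence."""
    return sorted({
        d.label
        for d in detections
        if d.label in ALERT_CLASSES and d.confidence >= min_confidence
    })


frame = [
    Detection("person", 0.91),
    Detection("helmet", 0.88),
    Detection("no-vest", 0.74),
    Detection("no-helmet", 0.31),  # below the threshold, so it is ignored
]
print(violations(frame))
```

Here only `no-vest` passes the threshold, so the frame would raise one alert. A real app would probably also require the violation to persist over several frames before alerting.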

Full Tech Stack

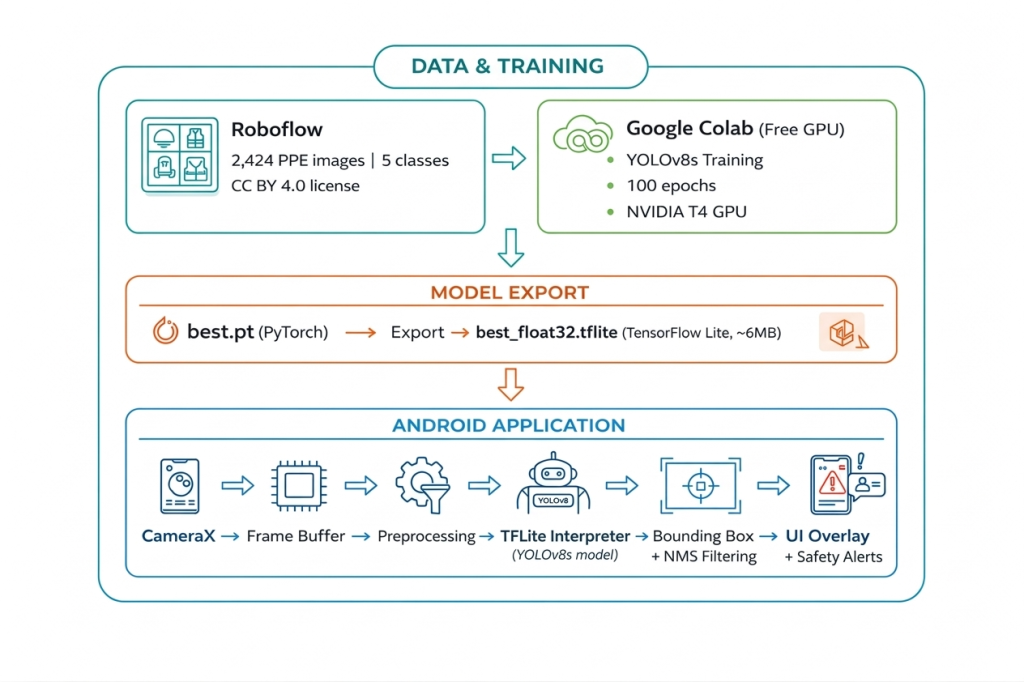

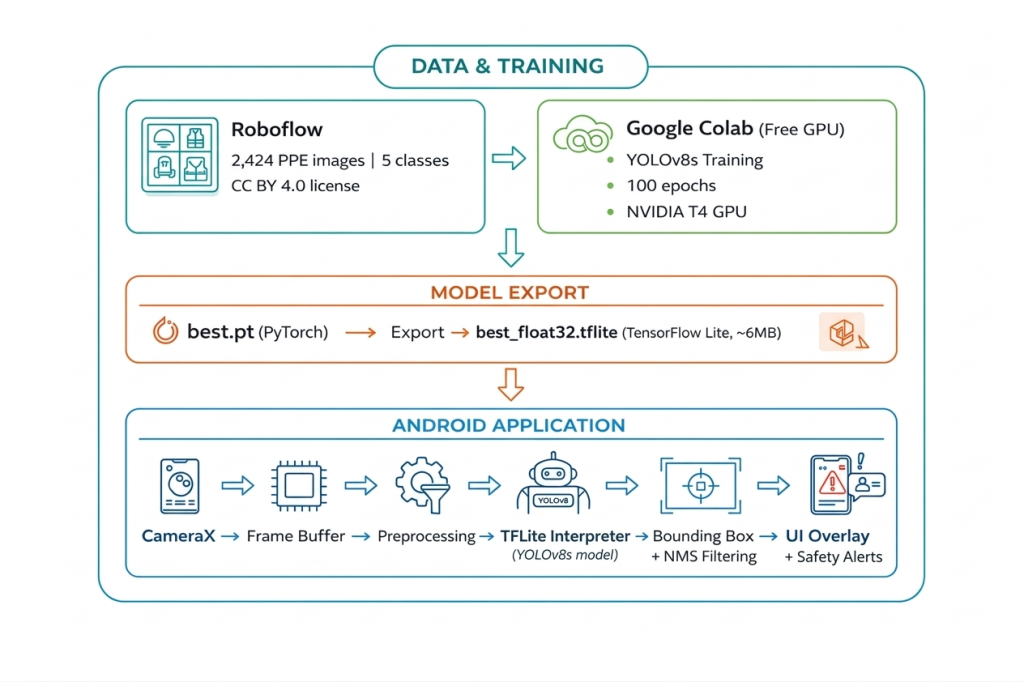

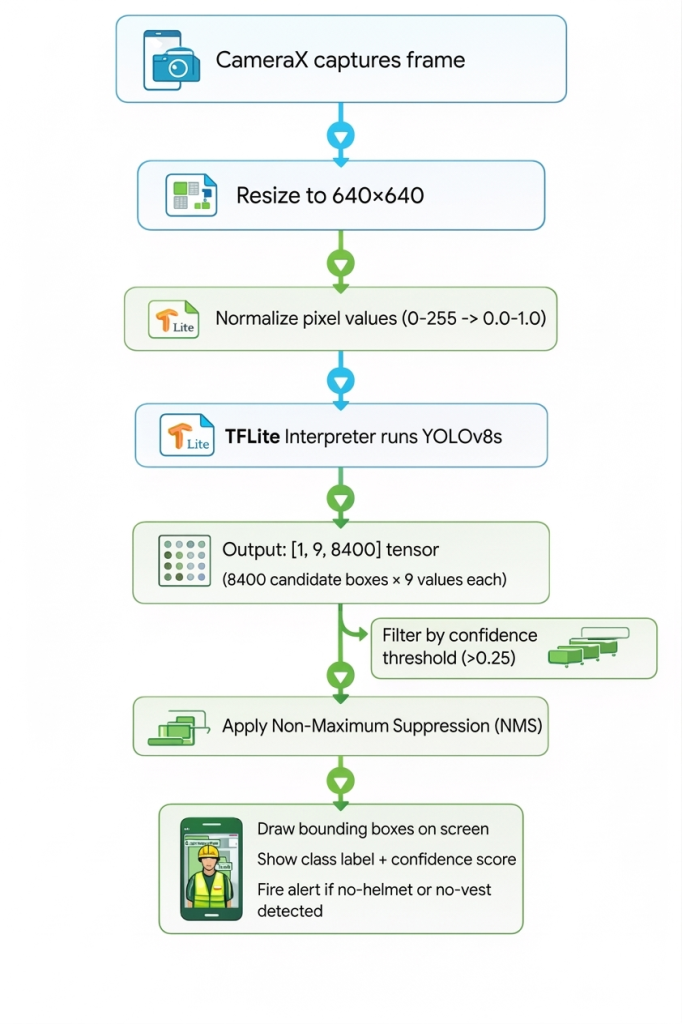

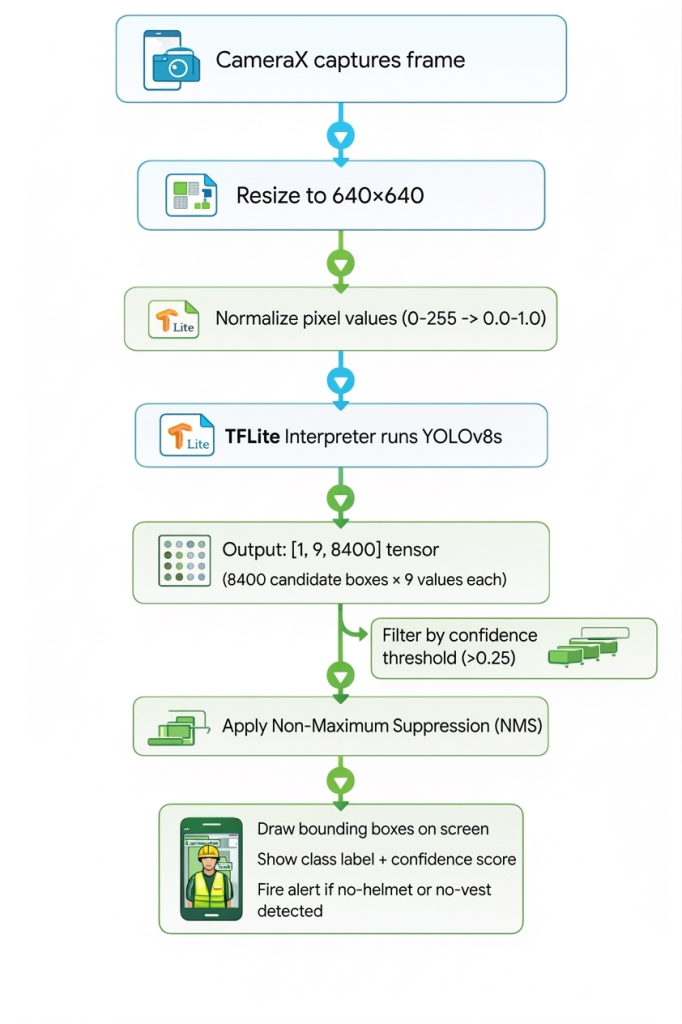

How It All Fits Together

Deep Dive: Each Component

1. Dataset – Roboflow Construction Safety

We used the construction-safety-gsnvb dataset from Roboflow-100 – a curated, open source collection of real construction site images.

Dataset: construction-safety-gsnvb (Roboflow-100)

Images: 2,424 images of real construction sites

Split: Train / Validation / Test

Classes: helmet, no-helmet, vest, no-vest, person

License: CC BY 4.0 (free for commercial use)

Why this dataset? Because it contains real-world images of workers in varied lighting conditions, angles, and distances, not studio shots.

2. Model – YOLOv8s

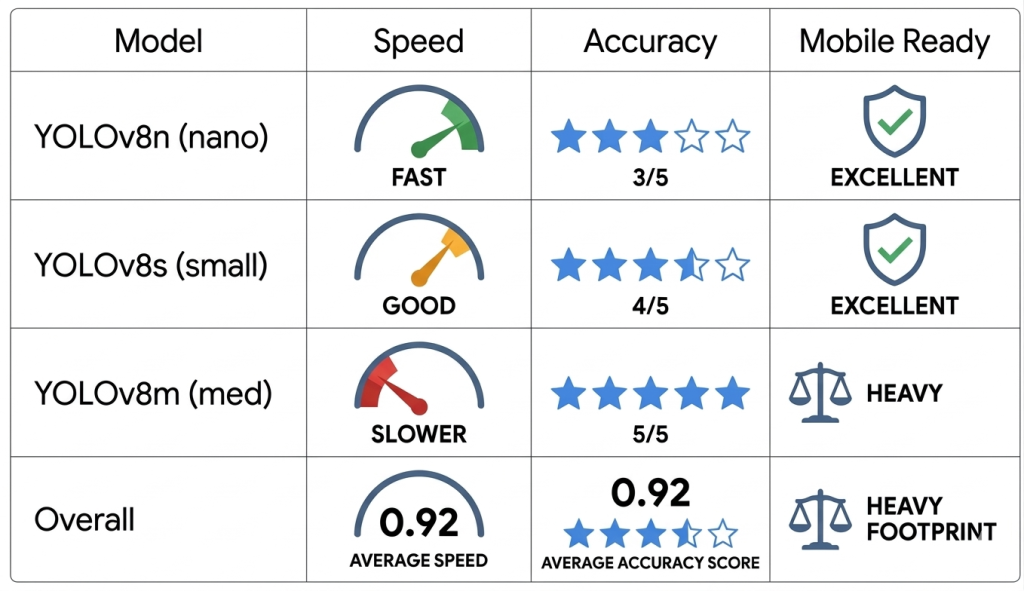

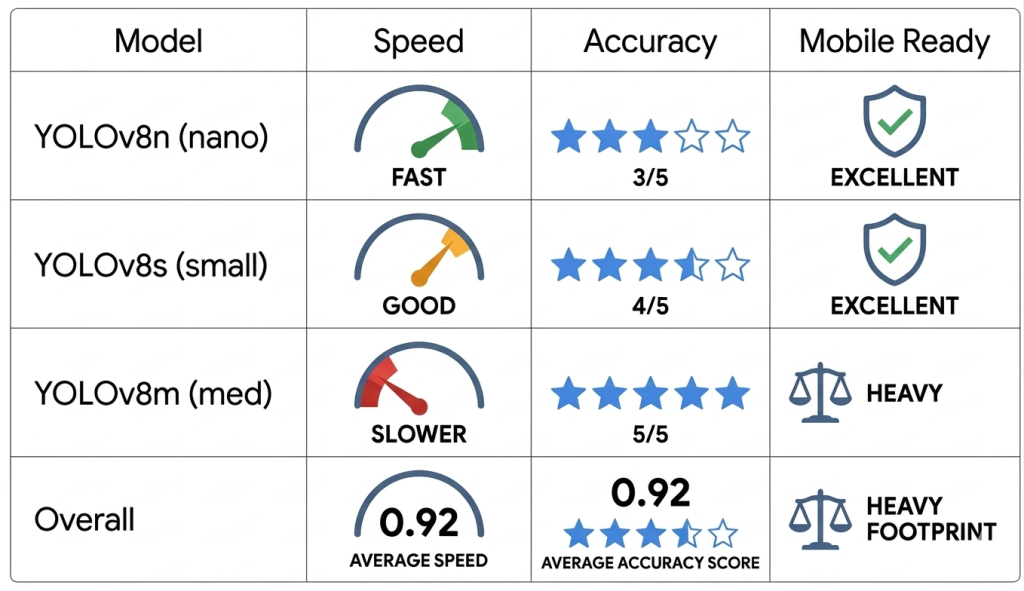

We chose YOLOv8s (the small variant) from Ultralytics after comparing three options:

We went with yolov8s — better accuracy than nano, still fast enough for real-time mobile detection.

Training configuration:

from ultralytics import YOLO

model = YOLO('yolov8s.pt')  # pretrained on COCO (transfer learning)

results = model.train(
    data="data.yaml",
    epochs=100,
    imgsz=640,
    device=0,      # GPU
    batch=16,
    mosaic=1.0,    # augmentation
    mixup=0.15,
    fliplr=0.5,
    degrees=10.0,
)

Why transfer learning? Instead of training from scratch, we started from yolov8s.pt, a model already trained on the 80 COCO classes. This means the model already understands shapes, edges, and human bodies. We just fine-tuned it to detect PPE specifically. This cut training time from days to less than 30 minutes on a free Colab GPU.

3. Model Training — Google Colab (Free)

The entire training pipeline ran on Google Colab’s free T4 GPU – no paid compute required.

Training environment:

Platform: Google Colab (free tier)

GPU: NVIDIA T4 (15GB VRAM)

Training time: 25-30 minutes for 100 epochs

Framework: Ultralytics 8.4.33

Python: 3.12

Training progress (loss decreasing = model learning):

Epoch 1/100 → loss: 3.17 mAP50: 0.14

Epoch 10/100 → loss: 1.93 mAP50: 0.36

Epoch 50/100 → loss: 1.12 mAP50: 0.71

Epoch 100/100 → loss: 0.87 mAP50: 0.92 ✅

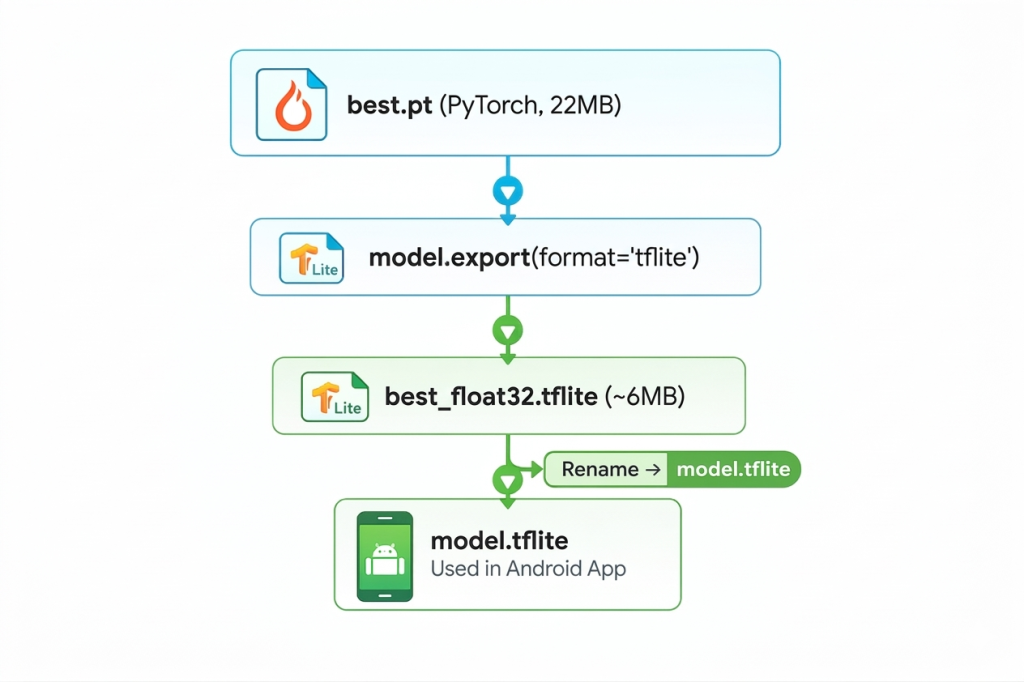

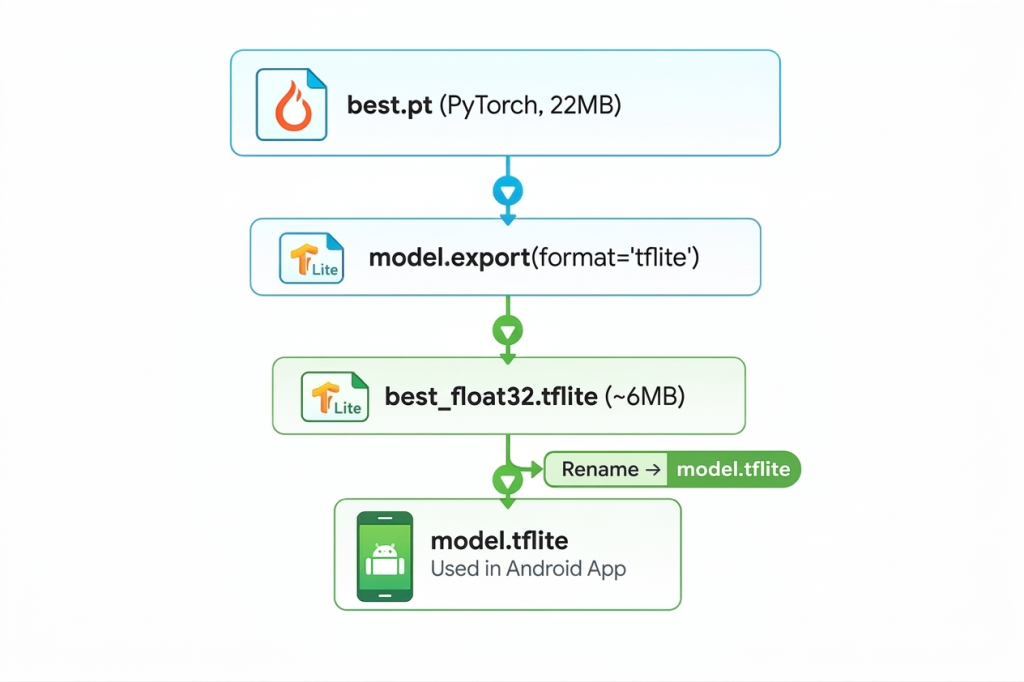

4. Export Pipeline — PyTorch to TFLite

After training, the model exists as a .pt (PyTorch) file. Android can’t run that directly, so we export to TensorFlow Lite format:

from ultralytics import YOLO

model = YOLO('runs/detect/train/weights/best.pt')
model.export(format="tflite")
# Output: runs/detect/train/weights/best_saved_model/best_float32.tflite

TFLite gives us:

- A ~6MB model instead of PyTorch’s 22MB weights

- Inference that runs entirely on the device – no internet required

- Hardware acceleration via the GPU delegate on compatible phones

- 40-60 ms inference per frame on mid-range Android devices

5. Android App Architecture

The Android app is written in Kotlin, using CameraX for camera access and the TFLite Interpreter to run the model.

android_app/
├── app/
│   └── src/main/
│       ├── assets/
│       │   ├── model.tflite   ← your trained PPE model
│       │   └── labels.txt     ← class names
│       └── java/com/ppeapp/yolov8tflite/
│           ├── MainActivity.kt   ← camera + UI
│           ├── Detector.kt       ← TFLite inference
│           ├── BoundingBox.kt    ← box data class
│           └── Constants.kt      ← model/label paths

Inference loop:
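One step of such a loop is turning the raw model output into labeled boxes. The Python sketch below illustrates that step only; it is not the app's Kotlin `Detector`. It assumes the usual YOLOv8 detection output layout of `4 + num_classes` rows (center x, center y, width, height, then one score per class) with one column per candidate box. The `decode` and `iou` helpers, both thresholds, and the class-agnostic suppression are choices made for the example.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def decode(output, labels, conf_threshold=0.5, iou_threshold=0.5):
    """Convert raw rows into (score, label, box) tuples, then suppress overlaps."""
    num_candidates = len(output[0])
    candidates = []
    for i in range(num_candidates):
        scores = [output[4 + c][i] for c in range(len(labels))]
        best = max(range(len(labels)), key=scores.__getitem__)
        if scores[best] < conf_threshold:
            continue  # no class is confident enough for this candidate
        cx, cy, w, h = (output[r][i] for r in range(4))
        box = (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)
        candidates.append((scores[best], labels[best], box))

    # Greedy non-maximum suppression, highest score first (class-agnostic).
    candidates.sort(key=lambda c: c[0], reverse=True)
    kept = []
    for cand in candidates:
        if all(iou(cand[2], k[2]) < iou_threshold for k in kept):
            kept.append(cand)
    return kept


labels = ["helmet", "no-helmet", "no-vest", "person", "vest"]
# Three made-up candidates: two near-duplicate helmet boxes and one person.
output = [
    [0.50, 0.51, 0.20],  # center x
    [0.30, 0.30, 0.60],  # center y
    [0.10, 0.10, 0.20],  # width
    [0.10, 0.10, 0.50],  # height
    [0.90, 0.80, 0.05],  # helmet scores
    [0.02, 0.03, 0.01],  # no-helmet scores
    [0.01, 0.01, 0.02],  # no-vest scores
    [0.05, 0.04, 0.85],  # person scores
    [0.01, 0.01, 0.03],  # vest scores
]
print([(label, score) for score, label, _ in decode(output, labels)])
```

The two helmet candidates overlap heavily, so only the higher-scoring one survives, alongside the person box. On a phone, the same logic would run once per camera frame, after the interpreter call and before the boxes are drawn.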

Why CameraX over Camera2?

Camera2:

It is powerful but complex. 1000+ lines of boilerplate.

Device-specific bugs. Manual lifecycle management.

CameraX:

Lifecycle-aware. It works the same way on all Android devices.

Built-in frame analysis. 30+ FPS out of the box.

We chose CameraX. Zero device-specific camera bugs after launch.

6. The labels.txt File

This small file maps class IDs to human-readable names. The order must be exactly the same as in your training data.yaml:

helmet

no-helmet

no-vest

person

vest

If this order is incorrect, the model will show the correct box but the incorrect label — such as drawing a box around the helmet and calling it “person.”
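A short Python sketch makes the failure mode concrete. The `load_labels` helper and the shuffled file are hypothetical; the class names are the five from the dataset, in the order of the file above.

```python
def load_labels(text):
    """Split labels.txt content into class names, one per non-empty line."""
    return [line.strip() for line in text.splitlines() if line.strip()]


# Same order as the training data.yaml.
correct = load_labels("helmet\nno-helmet\nno-vest\nperson\nvest\n")

# Hypothetical mistake: the same names saved in a different order.
shuffled = load_labels("person\nhelmet\nno-helmet\nno-vest\nvest\n")

class_id = 0  # the model outputs class 0, which it learned as "helmet"
print(correct[class_id])
print(shuffled[class_id])
```

The model only ever outputs the integer ID. With the correct file, ID 0 is displayed as a helmet; with the shuffled file the same box is drawn but captioned as a person.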

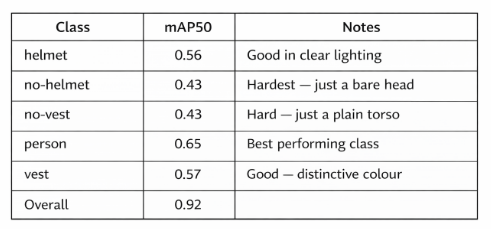

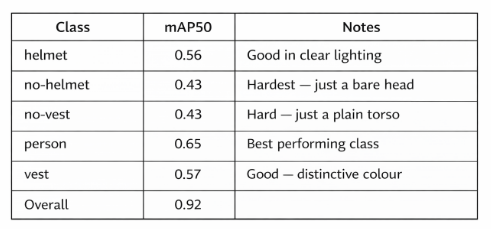

Model Performance

Inference time: 40-60 ms per frame (mid-range Android)

FPS: 15-30 FPS depending on the device

Model size: ~ 6MB

Minimum Android: API 24 (Android 7.0+)
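The per-frame time and the FPS figures are related by simple arithmetic. The sketch below assumes frames are processed one at a time, with inference as the only cost per frame; `max_fps` is a helper invented for the example.

```python
def max_fps(latency_ms):
    """Upper bound on frames per second when each frame takes latency_ms."""
    return 1000.0 / latency_ms


for latency_ms in (40, 60):
    print(f"{latency_ms} ms per frame -> at most {max_fps(latency_ms):.1f} FPS")
```

Under that assumption, 40-60 ms per frame corresponds to roughly 17-25 FPS. Reaching 30 FPS would need about 33 ms per frame, so the top of the FPS range presumably reflects faster devices or hardware acceleration.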

Why is no-helmet accuracy low? Because “no-helmet” is just a human head, and it looks like any other person. The model has to learn the absence of a hard hat rather than its presence. This is a much harder computer vision problem than finding a distinctive yellow helmet.

What We Learned

1. Small datasets have accuracy ceilings. With 2,424 images, we hit a ceiling of about mAP50 ~0.92 overall. Per-class scores for no-helmet and no-vest plateau as well. More data is the only real fix.

2. Transfer learning is a force multiplier. Starting with pre-trained COCO weights cut our training time from hours to 25 minutes. The model already knew what people looked like – we just taught it what PPE looked like.

3. TFLite deployment is straightforward. One line of code converts a YOLOv8 model to TFLite. Ultralytics handles all graph optimization and format conversion.

4. CameraX is ready for production. After moving from Camera2, we had zero device-specific camera bugs. We highly recommend it for any Android camera work.

5. On-device beats cloud for safety. No network latency. Works in basements, tunnels, and remote sites with no signal. Privacy is preserved: video never leaves the device.

Getting Started

Run the App

# Clone the repo
git clone

# Add your model files to:
# android_app/android_app/app/src/main/assets/
# ├── model.tflite
# └── labels.txt

# Open android_app/android_app in Android Studio
# Connect an Android phone (USB debugging on)
# Click Run ▶

Train Your Own Model

# Step 1 – Install
pip install ultralytics roboflow

# Step 2 – Download PPE dataset
from roboflow import Roboflow

rf = Roboflow(api_key="YOUR_KEY")
project = rf.workspace("roboflow-100").project("construction-safety-gsnvb")
dataset = project.version(1).download("yolov8")

# Step 3 – Train (data.yaml lives in the downloaded dataset folder)
from ultralytics import YOLO

model = YOLO('yolov8s.pt')
model.train(data=f"{dataset.location}/data.yaml", epochs=100, imgsz=640, device=0)

# Step 4 – Export to TFLite
model = YOLO('runs/detect/train/weights/best.pt')
model.export(format="tflite")

License & Credits

Released under the MIT License – use it commercially, modify it, ship it.

Developed by the engineering team at Spritle Software.

Thanks to the open source communities behind:

Repository: github.com/spritle-software/ppe-detection-app

Let’s make workplaces safer, one frame at a time. 🦺