Real-Time AI Training Data: The Missing Layer

A familiar pattern has emerged in robotics and autonomous systems: the first demo works well on stage, the same system stumbles in a live warehouse two weeks later, and the post-mortem test blames the “reality” for being dirtier than the test environment. Some voices in the field argue that the missing layer is hardware – better grips, torque sensors, tactile skins. That argument is correct, but not perfect. Even the best sensitive hardware produces raw signal streams that the model must learn translation. The real bottleneck under most of Physical AI’s failures is not the sensor. It is multimodal Virtual AI training data what teaches the models what those signs mean, how they relate to vision, and what actions to take when the world recedes. That data isn’t available on an industrial scale – and that’s a missing layer.

That’s the “Missing Layer” in Physical AI Actually

The typical Physical AI loop – sense, decide, act, adapt – is discussed as if it were a hardware and architecture problem. Basically, each arrow in this loop is a learned behavior. The feeling implies a model that transforms noisy, high-dimensional sensors into probabilistic state estimates. Decide means a policy that has seen enough diversity to be generalizable. Action means learned control versus real power. Convert it means noticing, in milliseconds, that a grip is slipping or a part is misaligned – and correcting the movement in the middle. None of these behaviors can be programmed to exist. They are learned from examples. If a Physical AI system cannot adapt during communication, the most common root cause is that its training data did not include enough labeled examples of communication to learn from. The hardware can broadcast the correct signals. The model still needs a dataset that makes those signals meaningful.

Why vision-only datasets break Physical AI

Consider a medium-sized fulfillment operator that supplies a joint picker to three distribution centers. The designer’s perception model was trained on millions of product images. It identifies things quickly. First week of live deployment, performance looks good. The third week, the exit falls on the third. The things the selector struggles with are not difficult to do see. They are difficult the handle: half-milled boxes that deform on contact, shrink wrap bundles that slide, and translucent plastic shells that confuse depth measurement when combined with overhead lights. The perception data told the model what things looked like. There is nothing in the training program that tells them how they feel, how they react to pressure, or when the grip is about to fail.

This is a structural gap in many Physical AI stacks – and it appears in datasets before it appears on the floor of the industry.

Peer-reviewed manipulation benchmarks have shown that adding tactile data to visual-only training lines can increase the manipulation success rate by up to 20 percent, with a significant lift from combined visual training (Source: IEEE/RSJ IROS benchmark results, 2024). The difference doesn’t add up. The line between demo and implementation.

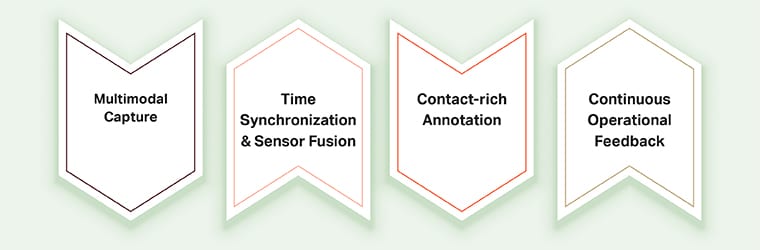

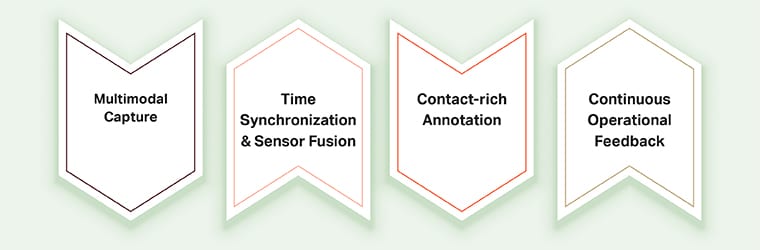

Four Layers of Real-Time AI Training Datasets

Building a dataset that teaches a model to do things in the world takes four tightly connected layers. Skip any of them and the stack above it collapses.

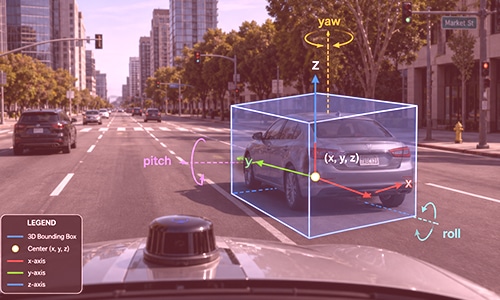

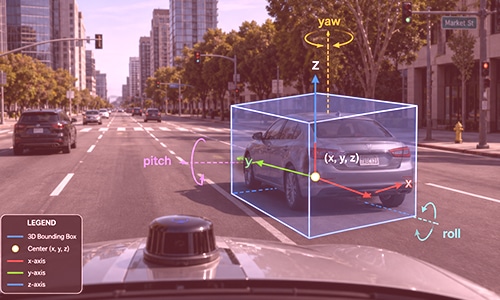

- Multimodal Imaging: The dataset should contain what the robot will actually experience: synchronized RGB and depth video, LiDAR or stereo where appropriate, tactile signals (pressure distribution, vibration, slip), force and torque readings at the contact point, proprietary data about the gripper’s position, and often noise. The imaging rig is as important as the sensors – placement, balance, and the ability to access the edge cases are critical. The teams building this in-house often pair in-house aircraft with a specialized AI data collection partner to access the variety, location, and scope of the situation with robust dataset requirements.

- Time synchronization and sensory integration: A tactile spike at 1,500 Hz is meaningless without knowing that the visual and sensory streams were being displayed in the same millisecond. The temporal alignment of all methods is what allows the model to learn, for example, that a certain visual indicator predicts a sliding event 40 milliseconds before the affected pressure drops. Without synchronization, you have a parallel stream rather than training data.

- Rich communication annotation: This is the most difficult layer and most programs are invisible. Annotations need to label grip quality, sliding times, contact initiation and release, position of the object within the gripper, deformation under force, and temporal parameters of small actions. Getting this right requires trained annotation teams, multi-stage reviews, and consistent guidelines across methods – which is why many critical tasks rely on structured data annotation workflows rather than trying to measure them automatically.

- Continuous performance feedback: Once the Physical AI system is implemented, all successful choices, misses, and failures become new data. Teams close the loop – capture, label, retrain, redistribute – see the combined benefits. Groups that don’t watch their models move silently as the world changes around them.

Why Physical Annotation for AI is a Distinct Discipline

Annotating Physical AI training data is not about labeling images with additional steps. It is a different discipline. Think of it as training an apprentice chef versus showing them cooking videos. Video teaches recognition – that’s a julienne cut, this is a snap. Apprenticeships teach how a sharp knife feels when dealing with a tough onion, when a pan is hot enough without checking a thermometer, and how to adjust the grip when the handle slips. The second type of learning requires someone close to the learner, to label the lived experience moment by moment. Physical AI annotation works in a similar way: annotations don’t just mark what’s visible; they label contact events, force profiles, smooth onsets, and temporal boundaries of actions across synchronized sensory streams. It requires background-aware annotations, rigorous QC, and specialized tools. Done right, it turns raw multimodal capture into a kind of robot training data that teaches the model to handle touch. Done poorly, it produces a shrill sound.

Conclusion – Hardware Ends the Loop; Data Begins It

Better grips, tactile skins, and power sensors are real progress. None of them obviate the need for multiple, synchronized, richly annotated modem datasets that teach the model what those signals mean in context. Organizations bridging the gap between Physical AI demos and Physical AI deployments are those that treat data as a first-class infrastructure – collecting it intentionally, annotating it with domain rigor, and providing performance data back to training as a permanent loop. Computer hardware completes the sensor-decide-act-adapt loop. The training data is the starting point.