Solving the “Whac-A-Mole problem”: A smart way to debias AI vision models | MIT News

In today’s hospitals and clinics, a dermatologist may use an artificial intelligence model to classify skin lesions and assess whether a lesion is at risk of becoming cancerous. But if the model is biased against a particular skin tone, it may fail to identify a patient at high risk.

Bias is one of the most well-known and persistent challenges that AI research continues to face. It is often discussed in relation to training data, but model architecture can also encode and amplify bias, hurting model performance in real-world settings. In high-stakes medical settings, the real consequences of such errors have made bias a critical safety issue.

A new paper from researchers at MIT, Worcester Polytechnic Institute, and Google, accepted at the 2026 International Conference on Learning Representations, proposes a novel debiasing method called “Weighted Rotational DebiasING” (WRING) that can be applied to vision-language models (VLMs), such as OpenAI’s CLIP.

VLMs are multimodal models that can understand and interpret several data formats, such as video, images, and text, simultaneously. Although debiasing methods for VLMs exist, the most commonly used one is known as “projection debiasing,” which leads to what has been called the “Whac-A-Mole dilemma,” a phenomenon that was formally introduced to AI research in 2023.

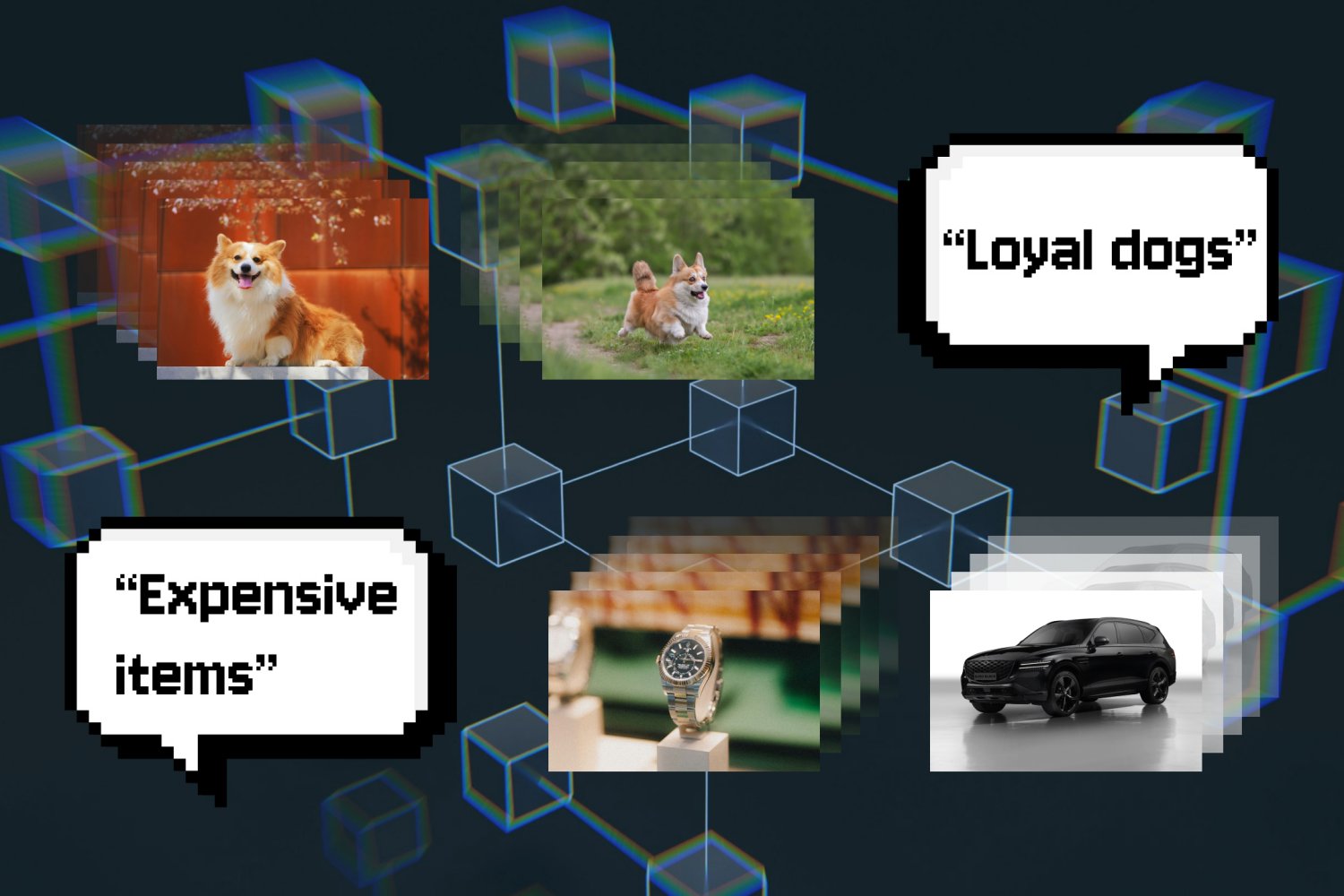

Projection debiasing is a post-processing technique that removes unwanted, biased information from a model’s embeddings by “projecting” the biased subspace out of the representation, thus eliminating the bias. But this method has its problems.
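As a rough illustration of the idea, the sketch below removes a single direction from a set of embedding vectors with NumPy. It is a minimal toy, not the procedure from the paper: the function name `project_out`, the random embeddings, and the single `bias_direction` vector are all assumptions made for the example. In practice the biased subspace would have to be estimated from the model and may span more than one dimension.

```python
import numpy as np

def project_out(embeddings: np.ndarray, bias_direction: np.ndarray) -> np.ndarray:
    """Remove the component of each embedding that lies along bias_direction."""
    v = bias_direction / np.linalg.norm(bias_direction)  # unit vector
    # For every row e, subtract (e . v) v, leaving only the part orthogonal to v.
    return embeddings - np.outer(embeddings @ v, v)

rng = np.random.default_rng(0)
embeddings = rng.normal(size=(5, 8))  # hypothetical: 5 embeddings of dimension 8
bias_direction = rng.normal(size=8)   # hypothetical direction encoding the unwanted attribute

debiased = project_out(embeddings, bias_direction)

# After projection, no embedding has any component left along the bias direction.
residual = debiased @ bias_direction
print(np.allclose(residual, 0.0))
```

Because the projection discards one dimension of every embedding, vectors that relied on that dimension shift relative to all the others, which is the side effect the researchers describe.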

“When you do that, you inadvertently hit everything,” said Walter Gerych, first author of the paper, who conducted the study last year as a postdoc at MIT. “Every other relationship that the model learns changes when you do that.”

Gerych, now an assistant professor of computer science at Worcester Polytechnic Institute, was joined on the paper by MIT graduate students Cassandra Parent and Quinn Perian; Rafiya Javed from Google; and MIT associate professors of electrical engineering Justin Solomon and Marzyeh Ghassemi. Ghassemi is also an affiliate of the Abdul Latif Jameel Clinic for Machine Learning and Health and the Laboratory for Information and Decision Systems.

While projection debiasing prevents the model from acting on the bias encoded in the removed subspace, it can end up amplifying or creating other biases, hence the Whac-A-Mole problem. According to Ghassemi, the unintended amplification of a model’s biases “is a technical and practical challenge. For example, when you debias a VLM that retrieves images of clinical staff, if the racial bias is removed, it can have the unintended effect of amplifying the gender bias.”
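One simple way such amplification can arise, shown here as a toy and not necessarily the mechanism analyzed in the paper, is through the cosine similarity that CLIP-style retrieval relies on. Removing one component of a vector leaves the remaining components carrying a larger share of its direction. The three-dimensional vectors below (`attr_a`, `attr_b`, `query`) are hypothetical values chosen for the example.

```python
import numpy as np

def cosine(a: np.ndarray, b: np.ndarray) -> float:
    """Cosine similarity between two vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

# Hypothetical unit directions for two unrelated attributes.
attr_a = np.array([1.0, 0.0, 0.0])  # attribute being removed
attr_b = np.array([0.0, 1.0, 0.0])  # a second attribute nobody targeted

# Hypothetical query embedding that leans on both attributes.
query = np.array([0.9, 0.3, 0.3])

before = cosine(query, attr_b)

# Projection debiasing: drop the component along attr_a.
projected = query - (query @ attr_a) * attr_a
after = cosine(projected, attr_b)

print(f"alignment with attribute B: before={before:.3f}, after={after:.3f}")
```

In this toy, the query’s alignment with the untouched attribute rises from about 0.30 to about 0.71 after the first attribute is projected out.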

WRING works by moving certain coordinates within the model’s high-dimensional space, those that appear to be responsible for the bias, to a different angle, so the model can no longer distinguish between different groups within a certain concept. This changes the representation in a particular subspace while leaving the model’s other relationships intact. And like projection debiasing, WRING is a post-processing technique, meaning it can be applied “on the fly” to a pre-trained VLM.
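The general geometric idea of rotating within a chosen subspace can be sketched as follows. This is not the WRING algorithm itself, whose weighting scheme and choice of directions are not described here. It is a plain rotation inside a two-dimensional plane, and the helper `rotate_in_plane`, the vectors `u` and `w`, and the 90-degree angle are assumptions made for the example.

```python
import numpy as np

def rotate_in_plane(x: np.ndarray, u: np.ndarray, w: np.ndarray, theta: float) -> np.ndarray:
    """Rotate a 1-D vector x by theta within the plane spanned by orthonormal u and w.

    Components of x orthogonal to that plane are left unchanged.
    """
    a, b = x @ u, x @ w  # coordinates of x inside the plane
    a_new = np.cos(theta) * a - np.sin(theta) * b
    b_new = np.sin(theta) * a + np.cos(theta) * b
    # Replace only the in-plane part of x with its rotated version.
    return x + (a_new - a) * u + (b_new - b) * w

dim = 6
u, w = np.eye(dim)[0], np.eye(dim)[1]  # hypothetical orthonormal pair defining the plane
rng = np.random.default_rng(1)
x = rng.normal(size=dim)               # hypothetical embedding

y = rotate_in_plane(x, u, w, np.pi / 2)

print(np.isclose(np.linalg.norm(y), np.linalg.norm(x)))  # length is preserved
print(np.allclose(y[2:], x[2:]))                         # other coordinates untouched
```

Unlike a projection, a rotation discards nothing: the vector keeps its length, and every coordinate outside the chosen plane stays exactly where it was.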

“People have already spent a lot of resources, a lot of money, training these big models, and we really don’t want to go in and fix something during the training because then you have to start from scratch,” explained Gerych. “[WRING is] very efficient. It does not require additional training of the model and is less invasive.”

In their results, the researchers found that WRING significantly reduced bias for the targeted concept without increasing bias in other areas. But currently, this approach is limited to Contrastive Language-Image Pre-training (CLIP) models, a type of VLM that links images to language for search or classification.

“Extending this to ChatGPT-style generative language models is a logical next step for us,” Gerych said.

This work was supported, in part, by a National Science Foundation CAREER Award, an AI2050 Award Early Career Fellowship, a Sloan Research Fellow Award, a Gordon and Betty Moore Foundation Award, and an MIT-Google Computing Innovation Award.