xAI Introduces grok-voice-think-fast-1.0: Topping the τ-voice Bench at 67.3%, Outperforming Gemini, GPT Realtime, and More

Building a production voice AI agent is one of the hardest engineering challenges in applied machine learning today. It is not just about transcription accuracy. You need a system that can hold the context of an entire five-minute conversation, call external APIs during the call without pausing, recover gracefully when the caller corrects themselves, and do all of this reliably when the audio is degraded by background noise, a heavy accent, or a dropped word. Most current systems handle one or two of those requirements. The newly released grok-voice-think-fast-1.0 from xAI makes a serious claim to handle them all, and the benchmark numbers back it up.

Available through the xAI API, grok-voice-think-fast-1.0 is xAI's new voice model. It is purpose-built for complex, ambiguous, multi-step workflows across customer support, sales, and enterprise applications, and it is already deployed at scale, powering Starlink's live phone sales and customer support.

What Makes a Voice Agent Full-Duplex?

Before digging into the benchmark results, it is worth understanding what kind of model grok-voice-think-fast-1.0 is. It is evaluated on the τ-voice (Tau-voice) Bench as a full-duplex voice agent: the system processes incoming speech and generates responses at the same time, rather than waiting for the speaker to pause before it starts thinking. This is how people communicate in real conversations. It is also why handling interruptions is a genuinely difficult technical problem: the model has to decide in real time whether a mid-sentence utterance is a correction, a clarification, or just a filler word, and adjust its behavior accordingly.

The τ-voice Bench evaluates agents specifically under these realistic conditions (noise, accents, interruptions, and natural turn-taking), which makes it a more appropriate measure of production performance than conventional ASR metrics measured on clean audio.

The Numbers: A Significant Lead

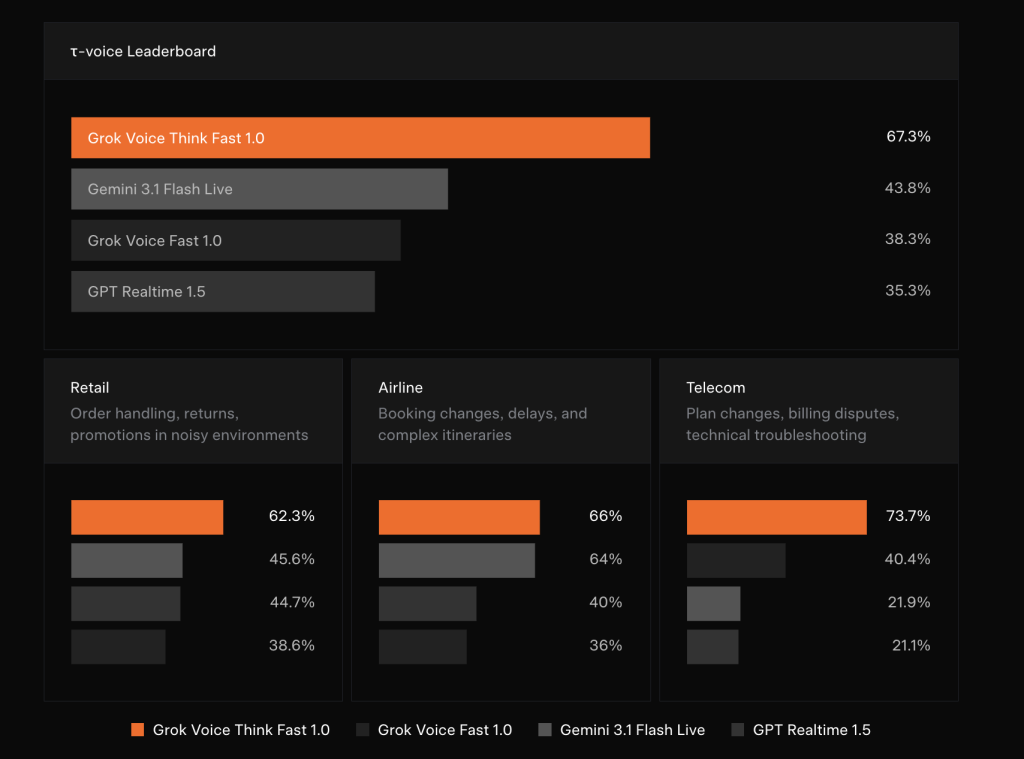

The benchmark results xAI published are striking for the size of the gaps. On the τ-voice Bench leaderboard, grok-voice-think-fast-1.0 scores 67.3%, compared to 43.8% for Gemini 3.1 Flash Live, 38.3% for Grok Voice Fast 1.0 (the previous xAI model), and 35.3% for GPT Realtime 1.5.

Breaking that down by vertical tells an even clearer story:

In Retail (order management, returns, and promotions in noisy environments), grok-voice-think-fast-1.0 scores 62.3%, followed by Grok Voice Fast 1.0 at 45.6%, Gemini 3.1 Flash Live at 44.7%, and GPT Realtime 1.5 at 38.6%.

In Airline (booking changes, delays, and complicated itineraries), the scores are 66% for grok-voice-think-fast-1.0, 64% for Grok Voice Fast 1.0, 40% for Gemini 3.1 Flash Live, and 36% for GPT Realtime 1.5.

The largest gap appears in Telecom (plan changes, billing disputes, and technical troubleshooting), where grok-voice-think-fast-1.0 achieves 73.7%, while Grok Voice Fast 1.0 scores 40.4%, Gemini 3.1 Flash Live 21.9%, and GPT Realtime 1.5 21.1%. A lead of roughly 33 percentage points over the next-best model in a single vertical is not a marginal improvement. It is a substantial advantage.
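To make the size of each gap concrete, the short Python sketch below recomputes the lead over the next-best model from the scores quoted above. The dictionary layout and the helper name `lead_over_runner_up` are illustrative choices, not part of any xAI tooling.

```python
# Scores (percent) exactly as quoted in this article.
LEADER = "grok-voice-think-fast-1.0"

SCORES = {
    "Overall": {LEADER: 67.3, "Gemini 3.1 Flash Live": 43.8,
                "Grok Voice Fast 1.0": 38.3, "GPT Realtime 1.5": 35.3},
    "Retail": {LEADER: 62.3, "Grok Voice Fast 1.0": 45.6,
               "Gemini 3.1 Flash Live": 44.7, "GPT Realtime 1.5": 38.6},
    "Airline": {LEADER: 66.0, "Grok Voice Fast 1.0": 64.0,
                "Gemini 3.1 Flash Live": 40.0, "GPT Realtime 1.5": 36.0},
    "Telecom": {LEADER: 73.7, "Grok Voice Fast 1.0": 40.4,
                "Gemini 3.1 Flash Live": 21.9, "GPT Realtime 1.5": 21.1},
}


def lead_over_runner_up(scores: dict, leader: str = LEADER) -> float:
    """Return the leader's margin, in percentage points, over the best other model."""
    best_other = max(value for name, value in scores.items() if name != leader)
    return round(scores[leader] - best_other, 1)


for category, scores in SCORES.items():
    print(f"{category}: +{lead_over_runner_up(scores):.1f} points")
```

Run this way, the margin is 23.5 points overall, 16.7 in Retail, 2.0 in Airline, and 33.3 in Telecom, so the lead is real in every category but far from uniform.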

Real-Time Reasoning With Zero Added Latency

One of the most important design decisions in this model is how reasoning is handled. grok-voice-think-fast-1.0 reasons in the background, thinking through challenging queries and workflows in real time without affecting response latency. For AI teams, this is the hard part to build: traditional reasoning models increase response time because they generate intermediate ‘thinking’ tokens before producing a response. Hiding that computation inside the conversational latency budget, while still benefiting from it, requires careful engineering.
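The general idea of hiding work inside time that is already being spent can be shown with a toy timing model. The sketch below is only an illustration of overlapping two tasks with `asyncio`; the function names, durations, and strings are invented for the example, and it says nothing about how xAI actually implements background reasoning.

```python
import asyncio
import time


async def listen_to_caller(duration_s: float) -> str:
    """Stand-in for streaming audio input; sleeps, then returns a transcript."""
    await asyncio.sleep(duration_s)
    return "I'd like to change my plan"


async def background_reasoning(duration_s: float) -> str:
    """Stand-in for reasoning work; sleeps, then returns a plan."""
    await asyncio.sleep(duration_s)
    return "look up the account, then list eligible plans"


async def sequential_turn() -> float:
    """Reason only after the caller has finished: the two delays add up."""
    start = time.perf_counter()
    await listen_to_caller(0.2)
    await background_reasoning(0.15)
    return time.perf_counter() - start


async def overlapped_turn() -> float:
    """Reason while the caller is still speaking: the shorter delay is hidden."""
    start = time.perf_counter()
    await asyncio.gather(listen_to_caller(0.2), background_reasoning(0.15))
    return time.perf_counter() - start


sequential = asyncio.run(sequential_turn())
overlapped = asyncio.run(overlapped_turn())
print(f"sequential: {sequential:.2f}s, overlapped: {overlapped:.2f}s")
```

In the overlapped version the turn takes about as long as listening alone, which is the property the article describes: the reasoning still happens, but the caller does not wait for it.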

The obvious benefit is accuracy without added latency. The xAI team demonstrates this with an edge case: when asked “Which months of the year are spelled with the letter X?”, grok-voice-think-fast-1.0 correctly answers that no month contains the letter X. Competing models, by contrast, confidently and incorrectly answered “February.” This category of error, where the model produces a plausible-sounding but wrong answer with high confidence, is especially damaging in voice interactions because users have no text output to check the answer against.
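The correct answer to that question is easy to verify mechanically. The snippet below is a simple check written for this article (the helper name `months_containing` is our own); it hardcodes the English month names so the result does not depend on the system locale.

```python
MONTHS = [
    "January", "February", "March", "April", "May", "June",
    "July", "August", "September", "October", "November", "December",
]


def months_containing(letter: str) -> list:
    """Return the English month names that contain the given letter (case-insensitive)."""
    return [month for month in MONTHS if letter.lower() in month.lower()]


print(months_containing("x"))  # prints []
```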

Accurate Data Entry and Read-Back

A core workflow capability of grok-voice-think-fast-1.0 is structured data capture and read-back. The model can accurately collect email addresses, street addresses, phone numbers, full names, account numbers, and other structured data, even when the information is spoken quickly or with a heavy accent. It handles speech disfluencies well and accepts natural corrections the way a human would, then reads the confirmed data back to the user.

xAI demonstrates this with a concrete example. The caller says: “Yeah, it’s 1410, oh wait, 1450 Page Mill Street. Actually no, that’s Page Mill Road.” The model processes the spoken corrections in real time, calls a search_address tool with the corrected parameter "1450 Page Mill Rd", and reads the normalized address back to the user for confirmation. For data teams that have spent time building post-call cleanup pipelines to extract structured fields from messy transcripts, this native capture and read-back capability represents a meaningful reduction in downstream processing complexity.
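On the application side, a tool call like this one typically arrives as a tool name plus JSON arguments that the developer's code dispatches to a backend. The sketch below shows that pattern in a generic function-calling style. Only the tool name `search_address` and the value "1450 Page Mill Rd" come from xAI's example; the parameter name `address`, the schema layout, the stub backend, and the read-back wording are all hypothetical and are not taken from the xAI API.

```python
import json

# Hypothetical tool definition in a generic JSON-Schema function-calling style.
SEARCH_ADDRESS_TOOL = {
    "name": "search_address",
    "description": "Look up and normalize a street address.",
    "parameters": {
        "type": "object",
        "properties": {"address": {"type": "string"}},
        "required": ["address"],
    },
}


def search_address(address: str) -> dict:
    """Stub backend; a real one would query an address-validation service."""
    return {"normalized": address.strip(), "found": True}


# Maps tool names to the Python callables that implement them.
TOOLS = {"search_address": search_address}


def handle_tool_call(name: str, arguments_json: str) -> dict:
    """Decode the JSON arguments and dispatch to the registered tool."""
    arguments = json.loads(arguments_json)
    return TOOLS[name](**arguments)


def read_back(result: dict) -> str:
    """Build the confirmation sentence the agent would speak to the caller."""
    return f"I have {result['normalized']}. Is that correct?"


# The call the model would emit after resolving the caller's corrections.
call = {
    "name": "search_address",
    "arguments": json.dumps({"address": "1450 Page Mill Rd"}),
}
result = handle_tool_call(call["name"], call["arguments"])
print(read_back(result))
```

The point of the pattern is that the backend only ever sees the final, corrected value; the intermediate "1410" and "Street" never leave the conversation.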

The model is battle-tested under the harshest real-world conditions: telephone-line audio, background noise, heavy accents, and frequent interruptions. It natively supports 25+ languages, making it suitable for global deployment across use cases including customer support, phone sales, appointment booking, and restaurant reservations.

Starlink Deployment: Production at Scale

The most compelling validation of grok-voice-think-fast-1.0 is not a benchmark but a live deployment. Grok Voice powers full phone sales and customer support for Starlink at +1 (888) GO STARLINK. The numbers xAI discloses for this deployment are operationally significant: a 20% sales conversion rate (meaning 1 in 5 callers making a sales inquiry buys a Starlink service while on the phone with Grok), a 70% autonomous resolution rate for customer support inquiries with no human in the loop, and a single agent working across 28 distinct tools that cover hundreds of support and sales workflows.

Key Takeaways

- grok-voice-think-fast-1.0 leads the τ-voice Bench with a score of 67.3%, outperforming Gemini 3.1 Flash Live (43.8%), Grok Voice Fast 1.0 (38.3%), and GPT Realtime 1.5 (35.3%).

- The model performs background reasoning with zero added latency, allowing it to think through complex, multi-step workflows in real time without slowing down conversational responses.

- Accurate data entry and read-back is a native capability, which allows the model to capture and validate structured data such as names, addresses, phone numbers, and account numbers even when spoken quickly, with an accent, or with mid-sentence corrections.

- The model supports 25+ languages and high-volume tool calling, making it applicable to global business use cases including customer support, phone sales, appointment booking, and restaurant reservations.

- Starlink’s live deployment demonstrates readiness for production at scale: a single Grok Voice agent works across 28 tools, achieves a 20% sales conversion rate, and autonomously resolves 70% of customer support inquiries with no human in the loop.

The post xAI Introduces grok-voice-think-fast-1.0: Topping the τ-voice Bench at 67.3%, Outperforming Gemini, GPT Realtime, and More appeared first on MarkTechPost.